PyTorch implementation of MV-VTON: Multi-View Virtual Try-On with Diffusion Models

- 🔥The first multi-view virtual try-on dataset MVG is now available.

- 🔥Checkpoints on both frontal-view and multi-view virtual try-on tasks are released.

Abstract:

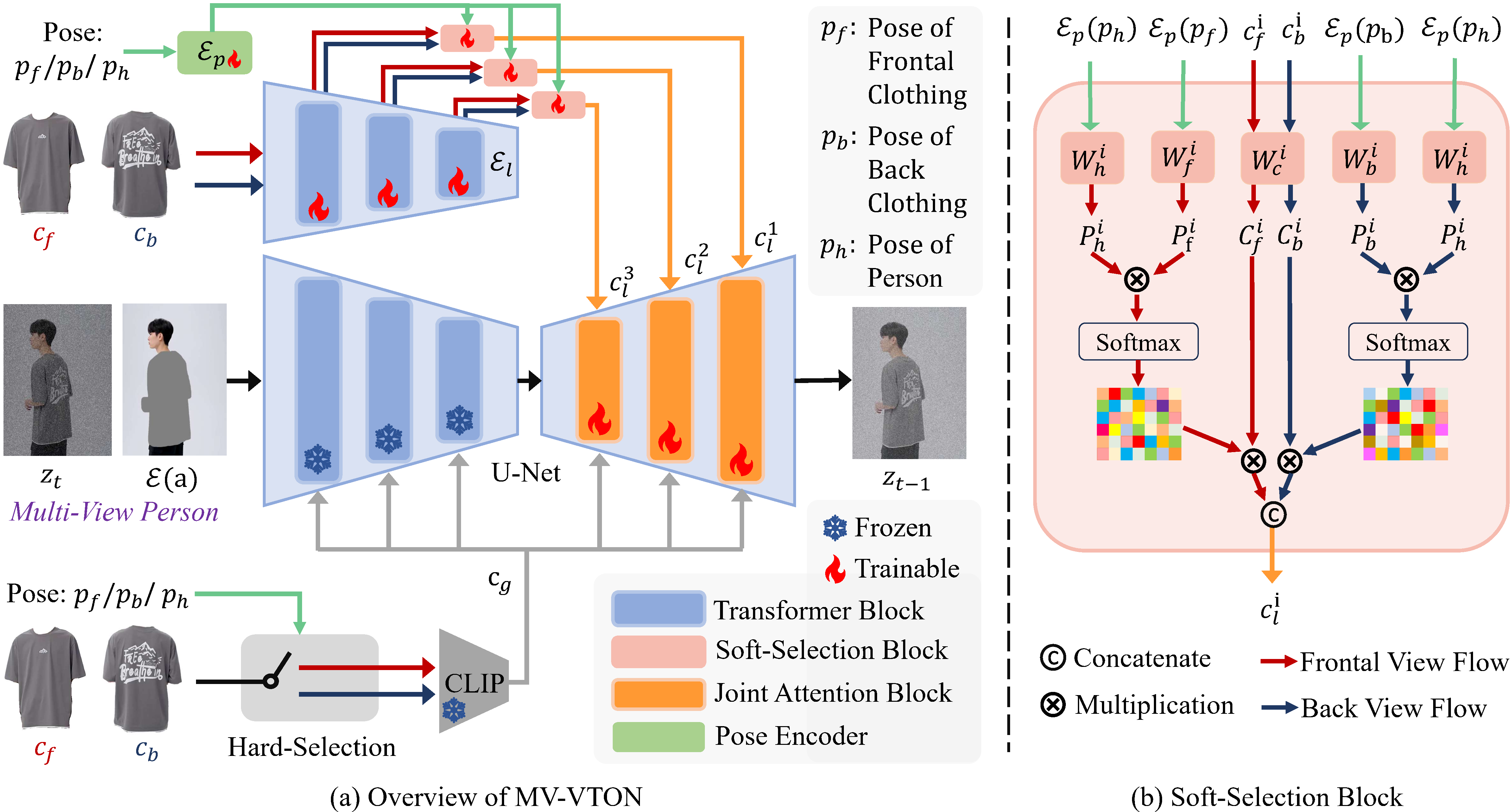

The goal of image-based virtual try-on is to generate an image of the target person naturally wearing the given clothing. However, most existing methods focus solely on frontal try-on using frontal clothing. When the views of the clothing and person are significantly inconsistent, particularly when the person’s view is non-frontal, the results are unsatisfactory. To address this challenge, we introduce Multi-View Virtual Try-ON (MV-VTON), which aims to reconstruct the dressing results of a person from multiple views using the given clothes. On the one hand, given that single-view clothes provide insufficient information for MV-VTON, we instead employ two images, i.e., the frontal and back views of the clothing, to encompass the complete view as much as possible. On the other hand, diffusion models, which have demonstrated superior abilities, are adopted to perform MV-VTON. In particular, we propose a view-adaptive selection method where hard-selection and soft-selection are applied to the global and local clothing feature extraction, respectively. This ensures that the clothing features roughly fit the person’s view. Subsequently, we suggest a joint attention block to align and fuse clothing features with person features. Additionally, we collect an MV-VTON dataset, i.e., Multi-View Garment (MVG), in which each person has multiple photos with diverse views and poses. Experiments show that the proposed method not only achieves state-of-the-art results on the MV-VTON task using our MVG dataset, but also performs better on the frontal-view virtual try-on task using the VITON-HD and DressCode datasets.

- Clone the repository

git clone https://github.com/hywang2002/MV-VTON.git

cd MV-VTON

- Install Python dependencies

conda env create -f environment.yaml

conda activate mv-vton

- Download the pretrained vgg checkpoint and put it in `models/vgg/` for Multi-View VTON and `Frontal-View VTON/models/vgg/` for Frontal-View VTON.

- Download the pretrained models `mvg.ckpt` via Baidu Cloud or Google Drive, and `vitonhd.ckpt` via Baidu Cloud or Google Drive, and put `mvg.ckpt` in `checkpoint/` and `vitonhd.ckpt` in `Frontal-View VTON/checkpoint/`.
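Before running anything, it can help to confirm that the downloaded files ended up where the steps above expect them. The following is a minimal sketch, not part of the repository: the function name `missing_assets` is hypothetical, and because the VGG checkpoint file name is not stated above, the sketch only checks that each `models/vgg/` directory exists and is non-empty.

```python
from pathlib import Path

# Paths relative to the repository root, as listed in the setup steps.
EXPECTED_FILES = [
    "checkpoint/mvg.ckpt",
    "Frontal-View VTON/checkpoint/vitonhd.ckpt",
]
EXPECTED_DIRS = [
    "models/vgg",
    "Frontal-View VTON/models/vgg",
]


def missing_assets(repo_root):
    """Return the expected checkpoint files and VGG directories that are absent or empty."""
    root = Path(repo_root)
    missing = [rel for rel in EXPECTED_FILES if not (root / rel).is_file()]
    for rel in EXPECTED_DIRS:
        folder = root / rel
        # Assumption: the VGG file name is unknown, so only require a non-empty directory.
        if not folder.is_dir() or not any(folder.iterdir()):
            missing.append(rel + "/")
    return missing


if __name__ == "__main__":
    for item in missing_assets("."):
        print("missing:", item)
```

Run it from the repository root; an empty output means every expected path was found.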

- Fill in the Dataset Request Form (available via Baidu Cloud or Google Drive) and send it to [email protected] to request the MVG dataset. Non-institutional email addresses (e.g. gmail.com) are not accepted; please use your institutional email address.

After these steps, the folder structure should look like this (`warp_feat_unpair*` is included only in the test directory):

├── MVG

│ ├── unpaired.txt

│ ├── [train | test]

│ │ ├── image-wo-bg

│ │ ├── cloth

│ │ ├── cloth-mask

│ │ ├── warp_feat

│ │ ├── warp_feat_unpair

│ │ ├── ...
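The layout above can be checked programmatically once the dataset is unpacked. This is a rough sketch under stated assumptions: `check_mvg_layout` is a hypothetical helper, it only covers the folders named in the tree (the `...` entry means the real dataset contains more), and it treats `warp_feat_unpair*` as a prefix that must match at least one folder in `test`.

```python
from pathlib import Path

# Subfolders named in the tree; the list is not exhaustive because the tree ends with "...".
COMMON_SUBDIRS = ["image-wo-bg", "cloth", "cloth-mask", "warp_feat"]
TEST_ONLY_PREFIX = "warp_feat_unpair"


def check_mvg_layout(mvg_root):
    """Return a list of expected MVG entries that could not be found."""
    root = Path(mvg_root)
    problems = []
    if not (root / "unpaired.txt").is_file():
        problems.append("unpaired.txt")
    for split in ("train", "test"):
        split_dir = root / split
        for name in COMMON_SUBDIRS:
            if not (split_dir / name).is_dir():
                problems.append(f"{split}/{name}")
    # warp_feat_unpair* is expected only in the test split.
    test_dir = root / "test"
    has_unpair = test_dir.is_dir() and any(
        p.is_dir() for p in test_dir.glob(TEST_ONLY_PREFIX + "*")
    )
    if not has_unpair:
        problems.append(f"test/{TEST_ONLY_PREFIX}*")
    return problems
```

Calling `check_mvg_layout("MVG")` returns an empty list when every entry from the tree is present.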

- Download VITON-HD dataset

- Download the pre-warped cloth images/masks via Baidu Cloud or Google Drive and put them under the VITON-HD dataset directory.

After these steps, the folder structure should look like this (`unpaired-cloth*` is included only in the test directory):

├── VITON-HD

│ ├── test_pairs.txt

│ ├── train_pairs.txt

│ ├── [train | test]

│ │ ├── image

│ │ │ ├── [000006_00.jpg | 000008_00.jpg | ...]

│ │ ├── cloth

│ │ │ ├── [000006_00.jpg | 000008_00.jpg | ...]

│ │ ├── cloth-mask

│ │ │ ├── [000006_00.jpg | 000008_00.jpg | ...]

│ │ ├── cloth-warp

│ │ │ ├── [000006_00.jpg | 000008_00.jpg | ...]

│ │ ├── cloth-warp-mask

│ │ │ ├── [000006_00.jpg | 000008_00.jpg | ...]

│ │ ├── unpaired-cloth-warp

│ │ │ ├── [000006_00.jpg | 000008_00.jpg | ...]

│ │ ├── unpaired-cloth-warp-mask

│ │ │ ├── [000006_00.jpg | 000008_00.jpg | ...]
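A quick consistency check can catch an incomplete download of the pre-warped files. The sketch below is illustrative only: `file_stems` and `check_split` are hypothetical names, and it assumes, as the tree suggests, that every subfolder mirrors the file names found in `image` (compared by name without extension).

```python
from pathlib import Path

COMMON_SUBDIRS = ["image", "cloth", "cloth-mask", "cloth-warp", "cloth-warp-mask"]
TEST_ONLY_SUBDIRS = ["unpaired-cloth-warp", "unpaired-cloth-warp-mask"]


def file_stems(folder):
    """Return the set of file names (without extension) directly inside a folder."""
    return {p.stem for p in Path(folder).iterdir() if p.is_file()}


def check_split(viton_root, split):
    """Map each problematic subfolder to None (folder missing) or to the
    sorted list of names present in `image` but absent from that subfolder."""
    split_dir = Path(viton_root) / split
    expected = COMMON_SUBDIRS + (TEST_ONLY_SUBDIRS if split == "test" else [])
    image_dir = split_dir / "image"
    reference = file_stems(image_dir) if image_dir.is_dir() else set()
    report = {}
    for name in expected:
        folder = split_dir / name
        if not folder.is_dir():
            report[name] = None
            continue
        absent = sorted(reference - file_stems(folder))
        if absent:
            report[name] = absent
    return report
```

An empty dictionary from `check_split("VITON-HD", "test")` means all expected folders exist and each one covers every name in `image`.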

To test Multi-View VTON on paired settings (using `cp_dataset_mv_paired.py`), you can either modify `configs/viton512.yaml` and `main.py`, or simply rename `cp_dataset_mv_paired.py` to `cp_dataset.py` (recommended). Then run:

sh test.sh

To test on unpaired settings, rename `cp_dataset_mv_unpaired.py` to `cp_dataset.py` and run the same command.
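Switching between the paired and unpaired dataset files by hand is easy to get wrong, so the step can be scripted. This is a hedged sketch rather than anything shipped with the repository: `select_dataset` is a hypothetical helper, `data_dir` must point at whichever folder holds the `cp_dataset_mv_*.py` files (the location is not stated above), and it copies the chosen file over `cp_dataset.py` instead of renaming it so that both variants stay available.

```python
import shutil
from pathlib import Path

# File names taken from the instructions above.
VARIANTS = {
    "paired": "cp_dataset_mv_paired.py",
    "unpaired": "cp_dataset_mv_unpaired.py",
}


def select_dataset(data_dir, setting):
    """Copy the chosen dataset variant over cp_dataset.py and return the target path."""
    if setting not in VARIANTS:
        raise ValueError(f"setting must be one of {sorted(VARIANTS)}, got {setting!r}")
    source = Path(data_dir) / VARIANTS[setting]
    if not source.is_file():
        raise FileNotFoundError(source)
    target = Path(data_dir) / "cp_dataset.py"
    # Copying (not renaming) keeps the original variant file in place.
    shutil.copyfile(source, target)
    return target
```

After calling `select_dataset(data_dir, "unpaired")`, run `sh test.sh` as usual.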

To test Frontal-View VTON on paired settings, run `cd Frontal-View\ VTON/`, then run:

sh test.sh

To test on unpaired settings, run `cd Frontal-View\ VTON/`, add `--unpaired` to `test.sh`, and then run:

sh test.sh

We compute LPIPS, SSIM, FID, and KID using the same tools as LaDI-VTON.

We use Paint-by-Example as initialization. Please download the pretrained model from Google Drive and save it to the `checkpoints` directory. Rename `cp_dataset_mv_paired.py` to `cp_dataset.py`, then run:

sh train.sh

To train Frontal-View VTON, run `cd Frontal-View\ VTON/`, then run:

sh train.sh

Our code borrows heavily from Paint-by-Example and DCI-VTON. We also thank the previous works PF-AFN, GP-VTON, LaDI-VTON, and StableVITON.

MV-VTON: Multi-View Virtual Try-On with Diffusion Models © 2024 by Haoyu Wang, Zhilu Zhang, Donglin Di, Shiliang Zhang, Wangmeng Zuo is licensed under CC BY-NC-SA 4.0

@article{wang2024mv,
  title={MV-VTON: Multi-View Virtual Try-On with Diffusion Models},
  author={Wang, Haoyu and Zhang, Zhilu and Di, Donglin and Zhang, Shiliang and Zuo, Wangmeng},
  journal={arXiv preprint arXiv:2404.17364},
  year={2024}
}