PyTorch reimplementation of Google's repository for the ViT model, released with the paper An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale by Alexey Dosovitskiy, Lucas Beyer, Alexander Kolesnikov, Dirk Weissenborn, Xiaohua Zhai, Thomas Unterthiner, Mostafa Dehghani, Matthias Minderer, Georg Heigold, Sylvain Gelly, Jakob Uszkoreit, Neil Houlsby.

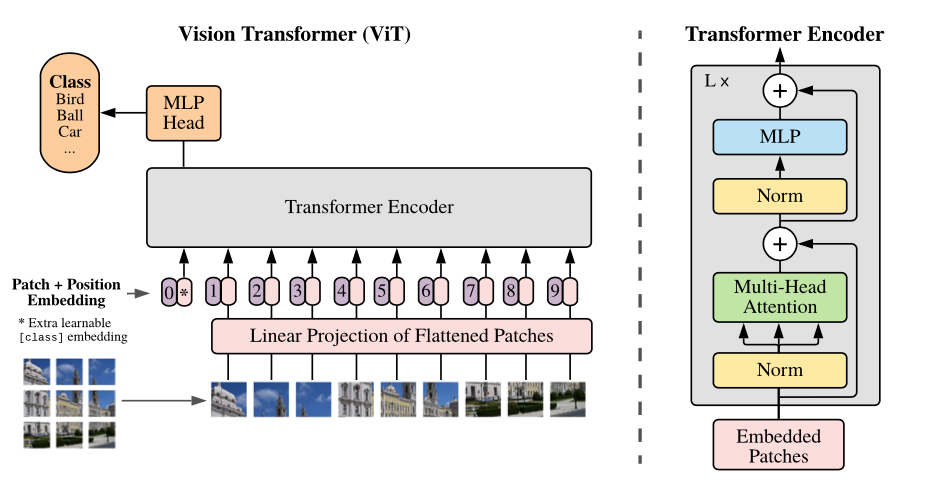

The paper shows that Transformers applied directly to image patches and pre-trained on large datasets perform very well on image recognition tasks.

Vision Transformer achieves state-of-the-art results on image recognition with a standard Transformer encoder and fixed-size patches. To perform classification, the authors use the standard approach of adding an extra learnable "classification token" to the sequence.
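As a rough illustration of how fixed-size patches and the extra classification token determine the encoder's input length, the sketch below counts tokens for a square image. The helper name `sequence_length` and the 224-pixel input size are assumptions for illustration, not part of this repository's code.

```python
def sequence_length(image_size: int, patch_size: int) -> int:
    """Number of tokens fed to the encoder for a square image.

    Hypothetical helper: the image is cut into non-overlapping
    patch_size x patch_size patches, and one classification token is added.
    """
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size
    num_patches = patches_per_side ** 2
    return num_patches + 1  # +1 for the learnable classification token


# Assumed 224x224 input; the patch sizes match the model names (_16, _32, _14).
for patch in (16, 32, 14):
    print(f"patch {patch}: {sequence_length(224, patch)} tokens")
```

With these assumptions, a 16x16 patch size yields 196 patches plus one classification token.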

- Available models: ViT-B_16 (85.8M), ViT-B_32 (87.5M), ViT-L_32 (305.5M), ViT-H_14 (630.8M)

- imagenet21k pre-train: ViT-B_16, ViT-B_32, ViT-L_32, ViT-H_14

- imagenet21k pre-train + imagenet2012 fine-tuning: ViT-B_16-224, ViT-B_16, ViT-B_32, ViT-L_16-224, ViT-L_16, ViT-L_32
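The parameter counts listed for the Base models can be approximated by hand. The sketch below is only an estimate under stated assumptions: the hyperparameters (hidden size 768, 12 layers, MLP size 3072) come from the paper's ViT-Base configuration rather than from this README, the input is assumed to be 224x224 RGB, and the classification head is excluded. The function name `vit_backbone_params` is hypothetical.

```python
def vit_backbone_params(hidden, layers, mlp_dim, patch_size,
                        image_size=224, channels=3):
    """Estimate trainable parameters of a ViT encoder without the head."""
    num_tokens = (image_size // patch_size) ** 2 + 1  # patches + class token

    # Linear projection of flattened patches (weights + bias).
    patch_embed = patch_size * patch_size * channels * hidden + hidden
    cls_token = hidden
    pos_embed = num_tokens * hidden

    # Per encoder layer: query, key, value and output projections,
    # a two-layer MLP, and two layer norms (scale + shift each).
    attention = 4 * (hidden * hidden + hidden)
    mlp = (hidden * mlp_dim + mlp_dim) + (mlp_dim * hidden + hidden)
    layer_norms = 2 * 2 * hidden
    per_layer = attention + mlp + layer_norms

    final_norm = 2 * hidden
    return patch_embed + cls_token + pos_embed + layers * per_layer + final_norm


# Assumed ViT-Base configuration from the paper.
b16 = vit_backbone_params(hidden=768, layers=12, mlp_dim=3072, patch_size=16)
b32 = vit_backbone_params(hidden=768, layers=12, mlp_dim=3072, patch_size=32)
print(f"ViT-B_16: {b16 / 1e6:.1f}M, ViT-B_32: {b32 / 1e6:.1f}M")
```

Under these assumptions the estimates round to the 85.8M and 87.5M figures listed above; the larger patch projection in ViT-B_32 accounts for the difference.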

# imagenet21k pre-train

wget https://storage.googleapis.com/vit_models/imagenet21k/{MODEL_NAME}.npz

# imagenet21k pre-train + imagenet2012 fine-tuning

wget https://storage.googleapis.com/vit_models/imagenet21k+imagenet2012/{MODEL_NAME}.npz
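If you prefer to build the download URL in a script, the `{MODEL_NAME}` placeholder can be filled in programmatically. This is a minimal sketch: `checkpoint_url` is a hypothetical helper, and the URL layout is taken from the two `wget` commands above, pointing at the storage.googleapis.com host.

```python
BASE_URL = "https://storage.googleapis.com/vit_models"


def checkpoint_url(model_name: str, fine_tuned: bool = False) -> str:
    """Return the .npz URL for a model name such as "ViT-B_16".

    fine_tuned=False -> imagenet21k pre-trained weights
    fine_tuned=True  -> imagenet21k pre-trained + imagenet2012 fine-tuned weights
    """
    upstream = "imagenet21k+imagenet2012" if fine_tuned else "imagenet21k"
    return f"{BASE_URL}/{upstream}/{model_name}.npz"


print(checkpoint_url("ViT-B_16"))
print(checkpoint_url("ViT-B_16-224", fine_tuned=True))
```

The helper does not check that a given model name exists for the chosen upstream; refer to the lists above for the available combinations.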

python3 train.py --name cifar10-100_500 --dataset cifar10 --model_type ViT-B_16 --pretrained_dir checkpoint/ViT-B_16.npz

CIFAR-10 and CIFAR-100 are downloaded automatically before training. To use a different dataset, you need to customize data_utils.py.

The default batch size is 512. If GPU memory is insufficient, you can continue training by increasing --gradient_accumulation_steps.

You can also use Automatic Mixed Precision (AMP) to reduce memory usage and speed up training:
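To see why gradient accumulation can stand in for a large batch, the toy sketch below compares the gradient of a mean-squared-error loss on a full batch with the gradient accumulated over equal-sized micro-batches. It uses a one-parameter linear model in plain Python; it is not this repository's training loop, and all names are hypothetical.

```python
def grad_mse(w, xs, ys):
    """Gradient of mean((w * x - y) ** 2) with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def accumulated_grad(w, xs, ys, accumulation_steps):
    """Accumulate gradients over equal-sized micro-batches.

    Each micro-batch gradient is scaled by 1 / accumulation_steps, so the
    sum matches the full-batch mean. Assumes len(xs) is divisible by
    accumulation_steps.
    """
    micro = len(xs) // accumulation_steps
    total = 0.0
    for i in range(accumulation_steps):
        lo, hi = i * micro, (i + 1) * micro
        total += grad_mse(w, xs[lo:hi], ys[lo:hi]) / accumulation_steps
    return total


xs = [float(i) for i in range(1, 9)]  # toy batch of 8 examples
ys = [3.0 * x + 1.0 for x in xs]

full = grad_mse(0.5, xs, ys)
accum = accumulated_grad(0.5, xs, ys, accumulation_steps=4)
print(full, accum)  # the two values agree up to floating-point error
```

Only one micro-batch needs to be held in memory at a time, which is what makes the technique useful when the full batch does not fit on the GPU.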

python3 train.py --name cifar10-100_500 --dataset cifar10 --model_type ViT-B_16 --pretrained_dir checkpoint/ViT-B_16.npz --fp16 --fp16_opt_level O2

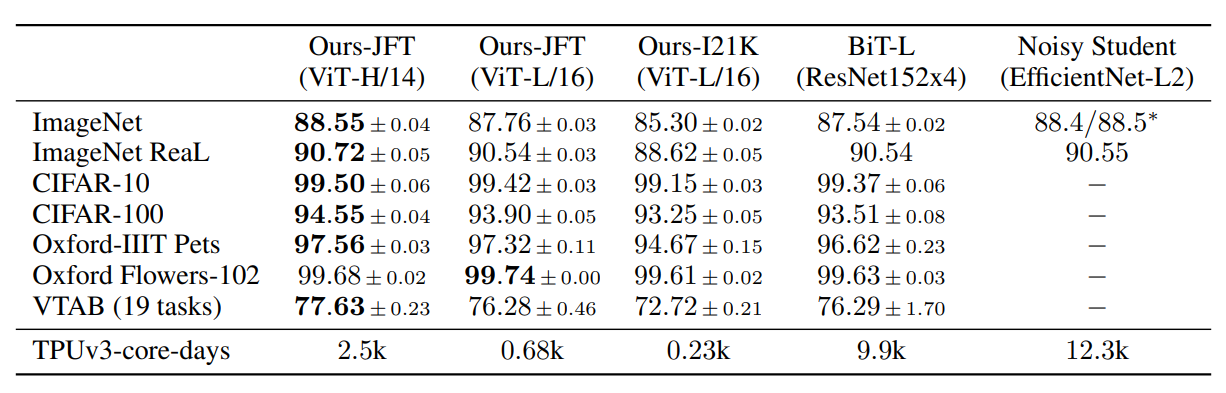

To verify reproducibility, we compared our results with the authors' reported results. We trained with mixed precision, with --fp16_opt_level set to O2.

| upstream | model | dataset | total_steps / warmup_steps | acc (official) | acc (this repo) |
|---|---|---|---|---|---|
| imagenet21k | ViT-B_16 | CIFAR-10 | 500/100 | 0.9859 | 0.9869 |
| imagenet21k | ViT-B_16 | CIFAR-10 | 1000/100 | 0.9886 | 0.9878 |
| imagenet21k | ViT-B_16 | CIFAR-100 | 500/100 | 0.8917 | 0.9205 |
| imagenet21k | ViT-B_16 | CIFAR-100 | 1000/100 | 0.9115 | 0.9256 |
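The total_steps / warmup_steps column describes how long the learning rate ramps up before it decays. The sketch below shows one common shape for such a schedule, linear warmup followed by cosine decay. This README does not state which decay is used, so the cosine shape and the function name `lr_factor` are assumptions for illustration only.

```python
import math


def lr_factor(step: int, warmup_steps: int, total_steps: int) -> float:
    """Multiplier applied to the base learning rate at a given step.

    Assumed schedule: linear warmup from 0 to 1, then cosine decay to 0.
    """
    if step < warmup_steps:
        return step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))


# The 500/100 setting from the table: 100 warmup steps out of 500 total.
for step in (0, 50, 100, 300, 500):
    print(step, round(lr_factor(step, warmup_steps=100, total_steps=500), 3))
```

The multiplier peaks at the end of warmup and falls back to zero at the final step, so a run with more total steps spends longer at moderate learning rates.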

@article{dosovitskiy2020,
  title={An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale},
  author={Dosovitskiy, Alexey and Beyer, Lucas and Kolesnikov, Alexander and Weissenborn, Dirk and Zhai, Xiaohua and Unterthiner, Thomas and Dehghani, Mostafa and Minderer, Matthias and Heigold, Georg and Gelly, Sylvain and Uszkoreit, Jakob and Houlsby, Neil},
  journal={arXiv preprint arXiv:2010.11929},
  year={2020}
}