🎉 Update: JaxMARL was accepted to the NeurIPS 2024 Datasets and Benchmarks Track. See you in Vancouver!

JaxMARL combines ease-of-use with GPU-enabled efficiency, and supports a wide range of commonly used MARL environments as well as popular baseline algorithms. Our aim is for one library that enables thorough evaluation of MARL methods across a wide range of tasks and against relevant baselines. We also introduce SMAX, a vectorised, simplified version of the popular StarCraft Multi-Agent Challenge, which removes the need to run the StarCraft II game engine.

For more details, take a look at our blog post or our Colab notebook, which walks through the basic usage.

| Environment | Reference | README | Summary |

|---|---|---|---|

| 🔴 MPE | Paper | Source | Communication orientated tasks in a multi-agent particle world |

| 🍲 Overcooked | Paper | Source | Fully-cooperative human-AI coordination tasks based on the homonyms video game |

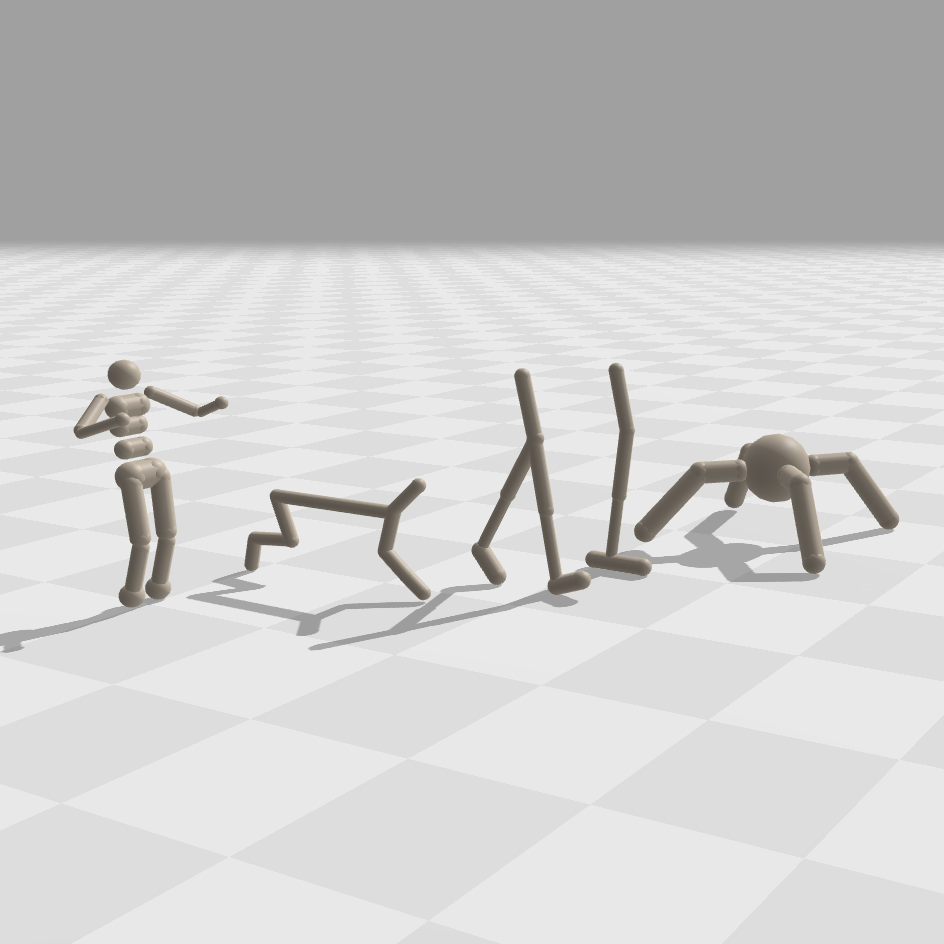

| 🦾 Multi-Agent Brax | Paper | Source | Continuous multi-agent robotic control based on Brax, analogous to Multi-Agent MuJoCo |

| 🎆 Hanabi | Paper | Source | Fully-cooperative partially-observable multiplayer card game |

| 👾 SMAX | Novel | Source | Simplified cooperative StarCraft micro-management environment |

| 🧮 STORM: Spatial-Temporal Representations of Matrix Games | Paper | Source | Matrix games represented as grid world scenarios |

| 🧭 JaxNav | Paper | Source | 2D geometric navigation for differential drive robots |

| 🪙 Coin Game | Paper | Source | Two-player grid world environment which emulates social dilemmas |

| 💡 Switch Riddle | Paper | Source | Simple cooperative communication game included for debugging |

We follow CleanRL's philosophy of providing single-file implementations, which can be found in the baselines directory. We use Hydra to manage our config files, with specifics explained in each algorithm's README. Most files include wandb logging code; this is disabled by default but can be enabled in the file's config.

| Algorithm | Reference | README |
|---|---|---|
| IPPO | Paper | Source |
| MAPPO | Paper | Source |
| IQL | Paper | Source |
| VDN | Paper | Source |
| QMIX | Paper | Source |
| TransfQMIX | Paper | Source |
| SHAQ | Paper | Source |
| PQN-VDN | Paper | Source |

Environments - Before installing, ensure you have the correct JAX installation for your hardware accelerator. We have tested up to JAX version 0.4.25. The JaxMARL environments can be installed directly from PyPI:

```bash
pip install jaxmarl
```
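Because only JAX versions up to 0.4.25 have been tested, a quick check of the installed version can save some debugging. The sketch below is a hypothetical helper, not part of JaxMARL: it reads the installed version with the standard-library `importlib.metadata` and compares its numeric release components against 0.4.25. Ignoring pre-release suffixes such as `rc1` or `.dev` builds is an assumption about how strict the check needs to be.

```python
import re
from importlib import metadata
from typing import Optional, Tuple

# Latest JAX version this README states has been tested.
MAX_TESTED_JAX = (0, 4, 25)


def parse_version(version: str) -> Tuple[int, ...]:
    """Extract the leading numeric release components, e.g. '0.4.25' -> (0, 4, 25).

    Suffixes such as 'rc1' or '.dev20240101' are ignored.
    """
    match = re.match(r"^(\d+(?:\.\d+)*)", version)
    if match is None:
        raise ValueError(f"Unrecognised version string: {version!r}")
    return tuple(int(part) for part in match.group(1).split("."))


def within_tested_range(version: str, max_tested: Tuple[int, ...] = MAX_TESTED_JAX) -> bool:
    """Return True if `version` is not newer than the latest tested release."""
    return parse_version(version) <= max_tested


def installed_jax_version() -> Optional[str]:
    """Return the installed JAX version string, or None if JAX is not installed."""
    try:
        return metadata.version("jax")
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_jax_version()
    if version is None:
        print("JAX is not installed; install the build for your accelerator first.")
    elif within_tested_range(version):
        print(f"JAX {version} is within the tested range.")
    else:
        print(f"JAX {version} is newer than the latest tested version (0.4.25).")
```

After installation, calling `jax.devices()` once is also worthwhile: it lists the devices JAX can see, which confirms that it is using your GPU or TPU rather than falling back to the CPU.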

Algorithms - If you would also like to run the algorithms, install the source code as follows:

- Clone the repository:

  ```bash
  git clone https://github.com/FLAIROx/JaxMARL.git && cd JaxMARL
  ```

- Install requirements:

  ```bash
  pip install -e .[algs]
  export PYTHONPATH=./JaxMARL:$PYTHONPATH
  ```

- For the fastest start, we recommend using our Dockerfile, the usage of which is outlined below.

Development - If you would like to run our test suite, install the additional dependencies after cloning the repository:

```bash
pip install -e .[dev]
```

We take inspiration from the PettingZoo and Gymnax interfaces. You can try out training an agent in our Colab notebook. Further introductory scripts can be found here.

Actions, observations, rewards and done values are passed as dictionaries keyed by agent name, allowing for differing action and observation spaces. The done dictionary contains an additional "__all__" key, specifying whether the episode has ended. We follow a parallel structure, with each agent passing an action at each timestep. For asynchronous games, such as Hanabi, a dummy action is passed for agents not acting at a given timestep.
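The dictionary convention described above can be made concrete with two small helpers. This is a minimal, hypothetical sketch: `split_dones` and `with_dummy_actions` are not part of the JaxMARL API, the agent names are illustrative, and the value used as a dummy action is environment-specific, so check the relevant environment's README before relying on it.

```python
from typing import Any, Dict, Sequence, Tuple


def split_dones(done: Dict[str, Any]) -> Tuple[Dict[str, Any], Any]:
    """Separate per-agent done flags from the episode-level "__all__" flag.

    Values are passed through unchanged, so this also works on JAX arrays
    inside a jitted function.
    """
    per_agent = {agent: flag for agent, flag in done.items() if agent != "__all__"}
    return per_agent, done["__all__"]


def with_dummy_actions(
    agents: Sequence[str], acting_agent: str, action: Any, dummy_action: Any
) -> Dict[str, Any]:
    """Build a full action dictionary for a turn-based (asynchronous) game.

    The interface is parallel, so every agent must appear in the dictionary.
    Agents that are not acting this timestep receive `dummy_action`, a
    hypothetical placeholder whose correct value depends on the environment.
    """
    return {agent: action if agent == acting_agent else dummy_action for agent in agents}


if __name__ == "__main__":
    done = {"agent_0": False, "agent_1": True, "__all__": False}
    per_agent, episode_over = split_dones(done)
    print(per_agent, episode_over)
    print(with_dummy_actions(["agent_0", "agent_1"], "agent_0", 3, 0))
```

Keeping `"__all__"` under its own key means code that averages rewards or masks losses per agent should iterate over `env.agents` (or filter the key out, as above) so the episode-level flag is never mistaken for an agent.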

```python
import jax
from jaxmarl import make

key = jax.random.PRNGKey(0)
key, key_reset, key_act, key_step = jax.random.split(key, 4)

# Initialise environment.
env = make('MPE_simple_world_comm_v3')

# Reset the environment.
obs, state = env.reset(key_reset)

# Sample random actions, one key per agent.
key_act = jax.random.split(key_act, env.num_agents)
actions = {agent: env.action_space(agent).sample(key_act[i]) for i, agent in enumerate(env.agents)}

# Perform the step transition. All outputs are dictionaries keyed by agent name;
# done["__all__"] indicates whether the episode has ended.
obs, state, reward, done, infos = env.step(key_step, state, actions)
```

To help get experiments up and running, we include a Dockerfile and its corresponding Makefile. With Docker and the NVIDIA Container Toolkit installed, the container can be built with:

```bash
make build
```

The built container can then be run:

```bash
make run
```

Please contribute! Take a look at our contributing guide for how to add an environment or algorithm, or to submit a bug report. Our roadmap also lives there.

If you use JaxMARL in your work, please cite us as follows:

```bibtex
@article{flair2023jaxmarl,
  title={JaxMARL: Multi-Agent RL Environments in JAX},
  author={Alexander Rutherford and Benjamin Ellis and Matteo Gallici and Jonathan Cook and Andrei Lupu and Gardar Ingvarsson and Timon Willi and Akbir Khan and Christian Schroeder de Witt and Alexandra Souly and Saptarashmi Bandyopadhyay and Mikayel Samvelyan and Minqi Jiang and Robert Tjarko Lange and Shimon Whiteson and Bruno Lacerda and Nick Hawes and Tim Rocktaschel and Chris Lu and Jakob Nicolaus Foerster},
  journal={arXiv preprint arXiv:2311.10090},
  year={2023}
}
```

There are a number of other libraries that inspired this work; we encourage you to take a look!

JAX-native algorithms:

- Mava: JAX implementations of IPPO and MAPPO, two popular MARL algorithms.

- PureJaxRL: JAX implementation of PPO, and demonstration of end-to-end JAX-based RL training.

- Minimax: JAX implementations of autocurricula baselines for RL.

- JaxIRL: JAX implementation of algorithms for inverse reinforcement learning.

JAX-native environments:

- Gymnax: Implementations of classic RL tasks including classic control, bsuite and MinAtar.

- Jumanji: A diverse set of environments ranging from simple games to NP-hard combinatorial problems.

- Pgx: JAX implementations of classic board games, such as Chess, Go and Shogi.

- Brax: A fully differentiable physics engine written in JAX, featuring continuous control tasks.

- XLand-MiniGrid: Meta-RL gridworld environments inspired by XLand and MiniGrid.

- Craftax: (Crafter + NetHack) in JAX.