This repository lets you create and search a vector database for relevant context across a wide variety of documents, and then pass that context to a large language model (LLM) to get a more accurate response. This is commonly referred to as "retrieval augmented generation" (RAG), and it drastically reduces hallucinations from the LLM! You can watch an introductory video or read a Medium article about the program.

| Feature | Details |
|---|---|
| General text extraction | .pdf .docx .epub .txt .html .enex .eml .msg .csv .xls .xlsx .rtf .odt |
| "Vision" models to create image summaries | .png .jpg .jpeg .bmp .gif .tif .tiff |
| Transcribe audio files to text | .mp3 .wav .m4a .ogg .wma .flac and more... |
| Type or speak your query | Using a powerful WhisperS2T voice recorder |
| Get a response from an LLM | LM Studio, Local Models, Chat GPT (coming soon) |
| Text to speech playback of the LLM's response | Bark, WhisperSpeech, ChatTTS, Google TTS |

CPU and Nvidia GPU support.

Looking for testers or contributors for AMD and Intel GPUs as well as Metal/MPS/MLX.

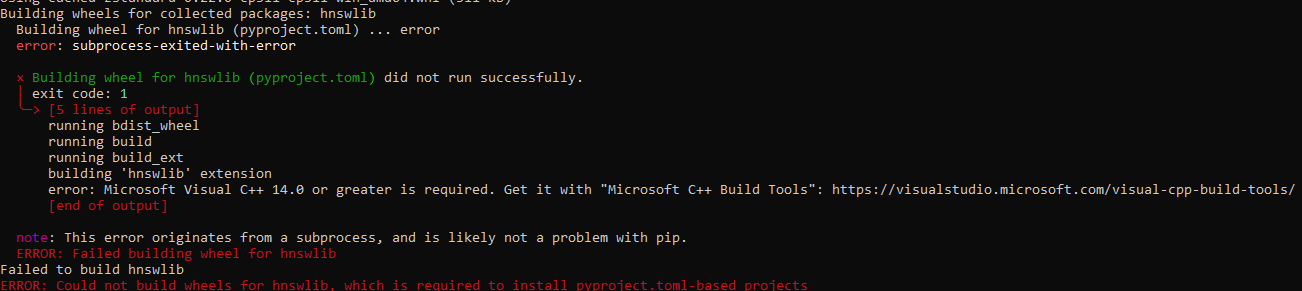

| 🐍 Python 3.11 • 📁 Git • 📁 Git LFS • 🌐 Pandoc • 🛠️ Compiler |
|---|

The Compiler link above downloads Visual Studio as an example. Make sure to install the required SDKs as well.
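If you want a quick sanity check of the prerequisites before continuing, a small script along these lines can help. It is a minimal sketch and not part of this repository: the executable names (`git`, `git-lfs`, `pandoc`) are assumptions about how these tools appear on your PATH, and it does not check for a compiler or SDKs.

```python
import shutil
import sys

# Executable names are assumptions about how each tool is exposed on PATH.
REQUIRED_TOOLS = ("git", "git-lfs", "pandoc")


def missing_tools(tools=REQUIRED_TOOLS, which=shutil.which):
    """Return the tools from `tools` that cannot be found on PATH."""
    return [tool for tool in tools if which(tool) is None]


def python_is_supported(version_info=sys.version_info):
    """True when the interpreter is Python 3.11, the version listed above."""
    return tuple(version_info[:2]) == (3, 11)


if __name__ == "__main__":
    if not python_is_supported():
        print(f"Expected Python 3.11, found {sys.version.split()[0]}")
    for tool in missing_tools():
        print(f"Not found on PATH: {tool}")
```

If the script prints nothing, the Python version matches and all three tools were found.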

Download the latest "release," extract its contents, and open the "src" folder:

- NOTE: If you clone this repository you will get the development version, which may or may not be stable.

The last release that attempted to support 🐧 Linux and 🍎 MacOS is Release v3.5.2. Make sure to follow the `readme.md` instructions there.

Within the src folder, create a virtual environment:

python -m venv .

Activate the virtual environment:

.\Scripts\activate

Run the setup script:

Only for Windows for now.

python setup_windows.py

In order to use the Ask Jeeves functionality you must:

- Go into the `Assets` folder.
- Right-click `koboldcpp_nocuda.exe` and choose Properties.
- Check the "Unblock" checkbox.
- Click OK.

If the "Unblock" checkbox is not visible for whatever reason, another option is to double-click `koboldcpp_nocuda.exe`, select the .gguf file within the `Assets` directory, and start the program. This should (at least on Windows) attempt to start the Kobold program, which will trigger an option to "allow" it and/or create an exception in "Windows Defender" on your computer. Select "Allow" or whatever equivalent option you are shown, which will enable it for all future interactions. Please note that you should do this before trying to run the Ask Jeeves functionality in this program; otherwise, it may not work.

Submit a GitHub Issue if you encounter any problems, as Ask Jeeves is a relatively new feature.

🔥Important🔥 for more detailed instructions just Ask Jeeves!

Every time you want to use the program, you must activate the virtual environment and then start the GUI:

.\Scripts\activate

python gui.py

- Select and download a vector/embedding model from the `Models` tab.

This program extracts the text from a variety of file types and puts it into the vector database. It also allows you to create summaries of images and transcriptions of audio files to be put into the database.

In the Create Database tab, select files you want to add to the database. You can click the Choose Files button as many times as you want.
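To see at a glance how a set of files would be handled, you can sort them by extension. The sketch below is only an illustration and not the program's actual code: the extension sets are copied from the feature table above, the audio set holds only the extensions named there (the table says "and more..."), and `classify` is a hypothetical helper name.

```python
from pathlib import Path

# Extensions copied from the feature table; AUDIO is only the named subset.
TEXT = {".pdf", ".docx", ".epub", ".txt", ".html", ".enex", ".eml", ".msg",
        ".csv", ".xls", ".xlsx", ".rtf", ".odt"}
IMAGE = {".png", ".jpg", ".jpeg", ".bmp", ".gif", ".tif", ".tiff"}
AUDIO = {".mp3", ".wav", ".m4a", ".ogg", ".wma", ".flac"}


def classify(path):
    """Return 'text', 'image', 'audio', or 'unknown' for a file path."""
    suffix = Path(path).suffix.lower()  # compare case-insensitively
    if suffix in TEXT:
        return "text"
    if suffix in IMAGE:
        return "image"
    if suffix in AUDIO:
        return "audio"
    return "unknown"
```

For example, `classify("Report.PDF")` returns `"text"`, while a file reported as `"unknown"` is one whose extension is not named in the table.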

This program uses "vision" models to create summaries of images, which can then be entered into the database and searched. Before inputting images, I highly recommend that you test the various vision models for the one you like the most.

To test a vision model:

- From the `Create Database` tab, select one or more images.
- From the `Settings` tab, select the vision model you want to test.
- From the `Tools` tab, process the images.

After determining which vision model you like, add images to the database by selecting them from the Create Database tab like any other file. When you eventually create the database they will be automatically processed.

Audio files can be transcribed and put into the database to be searched. Before transcribing a long audio file, I highly recommend testing the various Whisper models on a shorter audio file as well as experimenting with different batch settings. Your goal should be to use as large a Whisper model as your GPU supports and then adjust the batch size so that VRAM usage stays within what your GPU has available.

To test optimal settings:

- Within the `Tools` tab, select a short audio file.
- Select a `Whisper` model.
- Process the audio file.
- Within the `Create Database` tab, double-click the transcription that was just created.
- Skim the `page content` field to get a sense of whether the transcription is accurate enough for your use-case or if you need to select a more accurate `Whisper` model.
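The trial-and-error part of this (try a batch size, watch VRAM, adjust) can be thought of as a simple loop. The sketch below is an illustration only: `fits_in_vram` is a hypothetical callback standing in for "process a short file at this batch size and see whether VRAM usage stays within limits", and halving is just one reasonable way to step down.

```python
def halve_until_fits(start, fits_in_vram):
    """Halve the batch size from `start` until `fits_in_vram(batch)` is true.

    `fits_in_vram` is a hypothetical probe supplied by the caller.
    Returns the first batch size that fits, or None if even 1 does not.
    """
    batch = start
    while batch >= 1:
        if fits_in_vram(batch):
            return batch
        batch //= 2  # step down and try again
    return None
```

Because the loop halves each time, it finds a batch size that fits rather than the largest possible one; you can nudge the value upward by hand afterwards.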

Once you've obtained the optimal settings for your system, it's time to transcribe an audio file into the database:

- Within the `Create Database` tab, delete any transcriptions you don't want entered into the database.
- Create new transcriptions you want entered (repeat for multiple files).

Batch processing is not yet available.

- Download a vector model from the `Models` tab.
- Within the `Create Database` tab, create the database.
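Vector databases generally store text as "chunks" rather than whole documents, which is why the query results later on are described as chunks. The sketch below shows one common way to split text, using fixed-size character windows with some overlap. It is an assumption for illustration, not necessarily how this program splits text, and the `chunk_size` and `overlap` defaults are made-up values.

```python
def split_into_chunks(text, chunk_size=500, overlap=50):
    """Split `text` into character windows that overlap by `overlap` characters.

    The default sizes are illustrative only, not this program's settings.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
        start += step
    return chunks
```

The overlap means a sentence that straddles a boundary still appears intact in at least one chunk.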

- The `Manage Database` tab allows you to view the contents of all databases that you've created and delete them if you want.

- In the `Query Database` tab, select the database you want to use from the pulldown menu.
- Enter your question by typing it or using the `Record Question` button.
- Check the `chunks only` checkbox to only receive the relevant contexts.
- Click `Submit Question`.
- In the `Settings` tab, you can change multiple settings regarding querying the database. More information can be found in the User Guide.
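Conceptually, a "chunks only" query ranks the stored chunks by how similar they are to your question and returns the best matches. The sketch below illustrates that idea with a toy stand-in: word counts replace a real embedding model, and `embed`, `cosine`, and `top_chunks` are hypothetical names, not functions from this program.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_chunks(question, chunks, k=2):
    """Return the `k` chunks most similar to `question`."""
    query = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(query, embed(c)), reverse=True)
    return ranked[:k]
```

A real embedding model captures meaning rather than exact word overlap, so it can match a question to a chunk even when they share few words.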


This program gets relevant chunks from the vector database and forwards them - along with your question - to LM Studio for an answer!

- Perform the above steps regarding entering a question and choosing settings, but make sure that `Chunks Only` is 🔥UNCHECKED🔥.
- Start LM Studio and go to the Server tab on the left.
- At the top, load a model.
- Turn `Apply Prompt Formatting` to "OFF."
- On the right side within `Prompt Format`, make sure that all of the following settings are blank:
  - `System Message Prefix`
  - `System Message Suffix`
  - `User Message Prefix`
  - `User Message Suffix`
- On the right, adjust the `GPU Offload` setting to your liking.
- Within my program, go to the `Settings` tab, select the appropriate prompt format for the model loaded in LM Studio, and click `Update Settings`.
- In LM Studio, click `Start Server`.
- In the `Query Database` tab, click `Submit Question`.
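If you are curious what "forwarding the chunks along with your question" can look like, here is a minimal sketch. It assumes LM Studio's local server accepts OpenAI-style chat requests at `http://localhost:1234/v1/chat/completions` (check the address shown in the Server tab), and the prompt wording and the names `build_payload` and `ask_lm_studio` are hypothetical. It is not this program's actual code.

```python
import json
import urllib.request

# Assumed address of LM Studio's local server; check the Server tab.
LM_STUDIO_URL = "http://localhost:1234/v1/chat/completions"


def build_payload(question, chunks):
    """Combine retrieved chunks and the question into a chat request body."""
    context = "\n\n".join(chunks)
    return {
        "messages": [
            {"role": "system", "content": "Answer using only the provided context."},
            {"role": "user", "content": f"Context:\n{context}\n\nQuestion: {question}"},
        ]
    }


def ask_lm_studio(question, chunks, url=LM_STUDIO_URL):
    """Send the request to the local server and return the model's reply text."""
    data = json.dumps(build_payload(question, chunks)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

`ask_lm_studio` only works while the LM Studio server is running with a model loaded; `build_payload` can be inspected on its own to see exactly what would be sent.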

Feel free to report bugs or request enhancements by creating an issue on GitHub or contacting me on the LM Studio Discord server (see below link)!

All suggestions (positive and negative) are welcome. Email me at "[email protected]" or feel free to message me on the LM Studio Discord server.