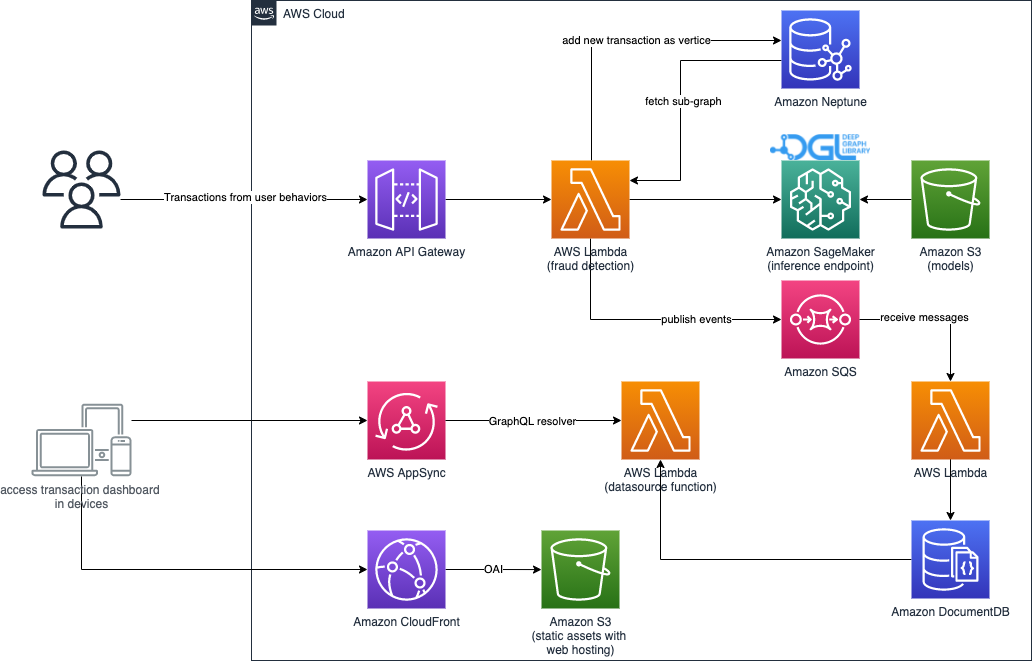

This is an end-to-end solution for real-time fraud detection. It uses the graph database Amazon Neptune, Amazon SageMaker, and Deep Graph Library (DGL) to construct a heterogeneous graph from tabular data and train a Graph Neural Network (GNN) model that detects fraudulent transactions in the IEEE-CIS Fraud Detection dataset.

This solution consists of the following stacks:

- Fraud Detection solution stack

- nested model training and deployment stack

- nested real-time fraud detection stack

- nested transaction dashboard stack

The model training and deployment pipeline is orchestrated by AWS Step Functions.

The solution creates a React-based web portal that shows the recent fraudulent transactions it has detected. This web application is built with Amazon CloudFront, AWS Amplify, AWS AppSync, Amazon API Gateway, AWS Step Functions, and Amazon DocumentDB.

After deploying this solution, go to AWS Step Functions in the AWS console, then start the state machine whose name starts with `ModelTrainingPipeline`.
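The console step above can also be scripted. The following is a minimal sketch, assuming boto3 is installed and AWS credentials are configured. The helper names `pick_state_machine` and `start_training` are hypothetical and not part of the solution; the sketch looks up the state machine by its name prefix and starts an execution, optionally with an input document.

```python
import json


def pick_state_machine(state_machines, prefix="ModelTrainingPipeline"):
    """Return the ARN of the first state machine whose name starts with prefix."""
    for machine in state_machines:
        if machine["name"].startswith(prefix):
            return machine["stateMachineArn"]
    raise LookupError(f"no state machine whose name starts with {prefix!r}")


def start_training(execution_input=None, region_name=None):
    """Start the training pipeline; execution_input is an optional dict."""
    import boto3  # imported lazily so pick_state_machine works without boto3

    sfn = boto3.client("stepfunctions", region_name=region_name)
    machines = []
    for page in sfn.get_paginator("list_state_machines").paginate():
        machines.extend(page["stateMachines"])
    response = sfn.start_execution(
        stateMachineArn=pick_state_machine(machines),
        input=json.dumps(execution_input or {}),
    )
    return response["executionArn"]
```

Calling `start_training()` with no arguments starts the pipeline with an empty input, so the defaults apply.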

You can pass the following input to override the default model training parameters:

    {
      "trainingJob": {
        "hyperparameters": {
          "n-hidden": "64",
          "n-epochs": "100",
          "lr": "1e-3"
        },
        "instanceType": "ml.c5.9xlarge",
        "timeoutInSeconds": 10800
      }
    }

The solution uses the graph database Amazon Neptune for real-time fraud detection and Amazon DocumentDB for the dashboard. Because of the regional availability of those services, the solution can be deployed to the following regions:

- US East (N. Virginia): us-east-1

- US East (Ohio): us-east-2

- US West (Oregon): us-west-2

- Canada (Central): ca-central-1

- South America (São Paulo): sa-east-1

- Europe (Ireland): eu-west-1

- Europe (London): eu-west-2

- Europe (Paris): eu-west-3

- Europe (Frankfurt): eu-central-1

- Asia Pacific (Tokyo): ap-northeast-1

- Asia Pacific (Seoul): ap-northeast-2

- Asia Pacific (Singapore): ap-southeast-1

- Asia Pacific (Sydney): ap-southeast-2

- Asia Pacific (Mumbai): ap-south-1

- China (Beijing): cn-north-1

- China (Ningxia): cn-northwest-1

| Region name | Region code | Launch |
|---|---|---|
| Global regions (switch to the region above that you want to deploy to) | us-east-1 (default) | Launch |
| AWS China (Beijing) Region | cn-north-1 | Launch |
| AWS China (Ningxia) Region | cn-northwest-1 | Launch |

See the deployment guide for detailed steps.

- An AWS account

- Configure credentials for the AWS CLI

- Install a Node.js LTS version, such as 12.x

- Install Docker Engine

- Install the solution's dependencies by running

      yarn install && npx projen

- Initialize the CDK toolkit stack in your AWS environment (only needed the first time you deploy with AWS CDK) by running

      yarn cdk-init

- [Optional] A public hosted zone in Amazon Route 53

- Authenticate with the following ECR repository in your AWS partition

      aws ecr get-login-password --region us-east-1 | docker login --username AWS --password-stdin 763104351884.dkr.ecr.us-east-1.amazonaws.com

  If you are deploying to China regions, run the following command:

      aws ecr get-login-password --region cn-northwest-1 | docker login --username AWS --password-stdin 727897471807.dkr.ecr.cn-northwest-1.amazonaws.com.cn

The deployment creates a new VPC spanning at least two AZs, along with NAT gateways. The solution is then deployed into the newly created VPC.

    yarn deploy

To deploy the solution to the default VPC, use the following command:

    yarn deploy-to-default-vpc

Or deploy to an existing VPC by specifying the VPC ID:

    npx cdk deploy -c vpcId=<your vpc id>

NOTE: make sure your existing VPC has both public subnets and private subnets with a NAT gateway.
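The VPC requirement in the note above can be checked before deploying. The following is a minimal sketch, assuming boto3 is installed and credentials are configured. The helpers `classify_subnets` and `vpc_is_suitable` are hypothetical names, not part of the solution. The sketch treats a subnet as public if its route table has any route to an internet gateway and as private with NAT if it has a route to a NAT gateway. It does not paginate results or inspect route destinations.

```python
def classify_subnets(subnet_ids, route_tables):
    """Split subnets into public and private-with-NAT lists.

    route_tables uses the shape of the EC2 DescribeRouteTables response.
    """
    main_table = None
    table_for_subnet = {}
    for table in route_tables:
        for assoc in table.get("Associations", []):
            if assoc.get("Main"):
                main_table = table
            if "SubnetId" in assoc:
                table_for_subnet[assoc["SubnetId"]] = table

    public, private_nat = [], []
    for subnet_id in subnet_ids:
        # Subnets without an explicit association use the VPC's main table.
        table = table_for_subnet.get(subnet_id, main_table)
        routes = table.get("Routes", []) if table else []
        if any(r.get("GatewayId", "").startswith("igw-") for r in routes):
            public.append(subnet_id)
        elif any(r.get("NatGatewayId") for r in routes):
            private_nat.append(subnet_id)
    return public, private_nat


def vpc_is_suitable(vpc_id, region_name=None):
    """Return True if the VPC has public subnets and private subnets with NAT."""
    import boto3  # imported lazily so classify_subnets works without boto3

    ec2 = boto3.client("ec2", region_name=region_name)
    vpc_filter = [{"Name": "vpc-id", "Values": [vpc_id]}]
    subnets = ec2.describe_subnets(Filters=vpc_filter)["Subnets"]
    tables = ec2.describe_route_tables(Filters=vpc_filter)["RouteTables"]
    public, private_nat = classify_subnets(
        [s["SubnetId"] for s in subnets], tables
    )
    return bool(public) and bool(private_nat)
```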

By default, the solution deploys a Neptune cluster with instance class db.r5.xlarge and 1 read replica. You can override the instance class and replica count as follows:

    npx cdk deploy --parameters NeptuneInstaneType=db.r5.4xlarge -c NeptuneReplicaCount=2

To deploy with SageMaker Serverless Inference (experimental), use:

    npx cdk deploy -c ServerlessInference=true -c ServerlessInferenceConcurrency=50 -c ServerlessInferenceMemorySizeInMB=2048

If you want to use a custom domain to access the solution's dashboard, use the following options when deploying. NOTE: you must already have created a public hosted zone in Route 53; see the solution prerequisites for details.

    npx cdk deploy -c EnableDashboardCustomDomain=true --parameters DashboardDomain=<the custom domain> --parameters Route53HostedZoneId=<hosted zone id of your domain>

To deploy to China regions, add the following context parameter:

    npx cdk deploy -c TargetPartition=aws-cn

NOTE: deploying to China regions also requires the following domain parameters, because the CloudFront distribution must be accessed via a custom domain.

    --parameters DashboardDomain=<the custom domain> --parameters Route53HostedZoneId=<hosted zone id of your domain>

Run the tests with:

    yarn test

There are Jupyter notebooks that let data engineers and data scientists experiment with feature engineering, model training, and deploying an inference endpoint without deploying this solution.

In fraud detection, fraudsters can work together in groups to hide their abnormal features, yet they leave traces in their relations. Traditional machine learning models use various features of samples. However, the relations among samples are usually ignored, either because no direct feature can represent them or because a feature has too many unique values to encode for a model. For example, IP addresses and physical addresses can link two accounts, but these addresses usually have too many unique values to be one-hot encoded. Many feature-based models therefore fail to exploit these potential relations.

Graph Neural Network models, on the other hand, benefit directly from links between samples once some categorical features of a sample are reconstructed as separate nodes in a graph structure. Through message passing and aggregation, GNN-based models use not only the features of samples but also the relations among them. Because they capture these relations, Graph Neural Networks are better at detecting collaborative fraud than traditional models.
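The idea above can be illustrated with a toy example. The following is a minimal sketch in plain Python, not the DGL model that the solution trains. The transactions, column names, and helper functions are hypothetical. It turns each categorical value into an entity node and runs one round of mean aggregation, without the learned weights or nonlinearities a real GNN layer would have.

```python
from collections import defaultdict

# Hypothetical transactions: an id, one numeric feature, two categorical columns.
transactions = [
    {"id": "t1", "amount": 10.0, "ip": "1.2.3.4", "card": "c1"},
    {"id": "t2", "amount": 30.0, "ip": "1.2.3.4", "card": "c2"},
    {"id": "t3", "amount": 50.0, "ip": "5.6.7.8", "card": "c2"},
    {"id": "t4", "amount": 70.0, "ip": "9.9.9.9", "card": "c3"},
]


def build_graph(rows, entity_columns):
    """Turn each categorical value into an entity node linked to its rows."""
    members = defaultdict(list)  # (column, value) -> transaction ids
    for row in rows:
        for column in entity_columns:
            members[(column, row[column])].append(row["id"])
    return members


def message_passing_round(rows, members):
    """One round of mean aggregation: transaction -> entity -> transaction."""
    feature = {row["id"]: row["amount"] for row in rows}
    # Step 1: each entity node averages the features of its transactions.
    entity_state = {
        entity: sum(feature[t] for t in txs) / len(txs)
        for entity, txs in members.items()
    }
    # Step 2: each transaction averages the states of its entities.
    incoming = defaultdict(list)
    for entity, txs in members.items():
        for t in txs:
            incoming[t].append(entity_state[entity])
    return {t: sum(values) / len(values) for t, values in incoming.items()}


members = build_graph(transactions, ["ip", "card"])
updated = message_passing_round(transactions, members)
```

After one round, `t1` is influenced by `t2` through the shared IP address, while `t4`, which shares no entity with any other transaction, keeps its own value.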

We use a graph database to store the relationships between entities. The graph database provides microsecond-level query performance when retrieving an entity's sub-graph for real-time fraud detection inference.
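To make the sub-graph lookup concrete, the following is a minimal in-memory sketch of extracting the neighborhood around one transaction. In the solution this is done with queries against the graph database; the adjacency dictionary and the helper `k_hop_subgraph` below are hypothetical and only illustrate the shape of the result.

```python
from collections import deque


def k_hop_subgraph(adjacency, start, hops):
    """Return all nodes within `hops` edges of `start` (breadth-first)."""
    distance = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distance[node] == hops:
            continue  # do not expand beyond the requested radius
        for neighbor in adjacency.get(node, ()):
            if neighbor not in distance:
                distance[neighbor] = distance[node] + 1
                queue.append(neighbor)
    return set(distance)


# Hypothetical graph: transactions linked through shared entity nodes.
adjacency = {
    "t1": ["ip:1.2.3.4"],
    "t2": ["ip:1.2.3.4", "card:c2"],
    "t3": ["card:c2"],
    "ip:1.2.3.4": ["t1", "t2"],
    "card:c2": ["t2", "t3"],
}
subgraph = k_hop_subgraph(adjacency, "t1", hops=2)
```

With two hops, the sub-graph for `t1` contains the shared IP address node and the other transaction that used it.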

This solution is based on Graph Neural Networks and graph-structured data, while Amazon Fraud Detector uses time-series models and takes advantage of Amazon's own data on fraudsters.

In addition, this solution serves as a reference architecture for graph analytics and real-time graph machine learning scenarios. Users can take this solution as a base and adapt it to their own environments.

This solution has several components and features beyond the current Amazon Neptune ML, including but not limited to:

- An end-to-end data processing pipeline that shows what a real-world data pipeline could look like. This helps industrial customers quickly get hands-on with a complete solution for a system based on a graph neural network model.

- A real-time online inference sub-system, while Neptune ML supports offline batch inference mode.

- A demo GUI that shows how the solution can solve real-world business problems, while Neptune ML primarily uses graph database queries to show results.

While this solution provides an overall architecture for end-to-end real-time inference, Amazon Neptune ML has been optimized for scalability and system-level performance, e.g. query latency. Therefore, once Amazon Neptune ML supports real-time inference, it could be integrated into this solution as the main training and inference sub-system for customers who require better scalability and low latency.

The deployment might fail while creating a CloudWatch log group, with an error message like the following:

Cannot enable logging. Policy document length breaking Cloudwatch Logs Constraints, either < 1 or > 5120 (Service: AmazonApiGatewayV2; Status Code: 400; Error Code: BadRequestException; Request ID: xxx-yyy-zzz; Proxy: null)

This happens because CloudWatch Logs resource policies are limited to 5120 characters. To fix it, merge or remove unused policies, then update the CloudWatch Logs resource policy so that it fits within the limit.
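To see which policies take up the most space, the existing resource policies can be listed with their sizes. The following is a minimal sketch, assuming boto3 is installed and credentials are configured. The helper names `policy_sizes` and `list_policy_sizes` are hypothetical, and the sketch reads only the first page of results.

```python
POLICY_LIMIT = 5120  # character limit quoted in the error message


def policy_sizes(resource_policies):
    """Return (name, length) pairs for each policy document, largest first."""
    sizes = [
        (policy["policyName"], len(policy["policyDocument"]))
        for policy in resource_policies
    ]
    return sorted(sizes, key=lambda item: item[1], reverse=True)


def list_policy_sizes(region_name=None):
    """Fetch CloudWatch Logs resource policies and report their sizes."""
    import boto3  # imported lazily so policy_sizes works without boto3

    logs = boto3.client("logs", region_name=region_name)
    response = logs.describe_resource_policies()
    return policy_sizes(response["resourcePolicies"])
```

Policies whose length is close to `POLICY_LIMIT` are the first candidates for merging or cleanup.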

See CONTRIBUTING for more information.

This project is licensed under the Apache-2.0 License.