This package provides a generic, simple and fast implementation of DeepMind's AlphaZero algorithm:

- The core algorithm is only 2,000 lines of pure, hackable Julia code.

- Generic interfaces make it easy to add support for new games or new learning frameworks.

- Being between one and two orders of magnitude faster than competing alternatives written in pure Python, this implementation can solve nontrivial games on a standard desktop computer with a GPU.

- The same agent can be trained on a cluster of machines as easily as on a single computer and without modifying a single line of code.

Beyond its much-publicized success in attaining superhuman level at games such as Chess and Go, DeepMind's AlphaZero algorithm illustrates a more general methodology of combining learning and search to explore large combinatorial spaces effectively. We believe that this methodology can have exciting applications in many different research areas.

Because AlphaZero is resource-hungry, successful open-source implementations (such as Leela Zero) are written in low-level languages (such as C++) and optimized for highly distributed computing environments. This makes them hard for students, researchers and hackers to access.

The motivation for this project is to provide an implementation of AlphaZero that is simple enough to be widely accessible, while also being sufficiently powerful and fast to enable meaningful experiments on limited computing resources. We found the Julia language to be instrumental in achieving this goal.

To download AlphaZero.jl and start training a Connect Four agent, just run:

export GKSwstype=100 # To avoid an occasional GR bug

git clone https://github.com/jonathan-laurent/AlphaZero.jl.git

cd AlphaZero.jl

julia --project -e 'import Pkg; Pkg.instantiate()'

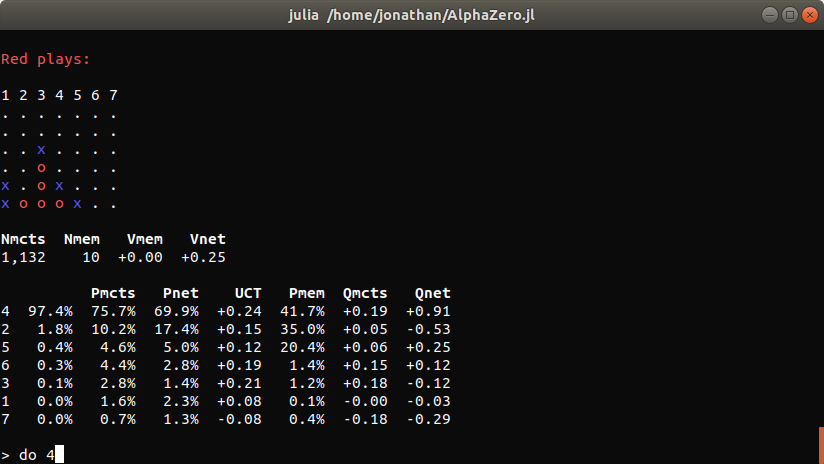

julia --project -e 'using AlphaZero; Scripts.train("connect-four")'Each training iteration takes about one hour on a desktop computer with an Intel Core i5 9600K processor and an 8GB Nvidia RTX 2070 GPU. We plot below the evolution of the win rate of our AlphaZero agent against two baselines (a vanilla MCTS baseline and a minmax agent that plans at depth 5 using a handcrafted heuristic):

Note that the AlphaZero agent is not exposed to the baselines during training and learns purely from self-play, without any form of supervision or prior knowledge.

We also evaluate the performance of the neural network alone against the same baselines. Instead of plugging it into MCTS, we play the action that is assigned the highest prior probability at each state:

Unsurprisingly, the network alone is initially unable to win a single game. However, it ends up significantly stronger than the minmax baseline despite not being able to perform any search.
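The network-only player described above amounts to a greedy choice over the prior probabilities. Here is a minimal sketch of that rule, written as a standalone helper: `greedy_action` is a hypothetical name and is not part of the AlphaZero.jl API, and the prior values in the example are made up for illustration.

```julia
# Hypothetical helper, not part of the AlphaZero.jl API.
# `actions` lists the legal actions in a state, and `priors[i]` is the
# probability that the network assigns to `actions[i]`.
function greedy_action(actions::Vector, priors::Vector{<:Real})
    length(actions) == length(priors) ||
        throw(ArgumentError("actions and priors must have the same length"))
    isempty(actions) && throw(ArgumentError("no legal action available"))
    # `argmax` returns the index of the first maximal entry.
    return actions[argmax(priors)]
end

# Example: four legal Connect Four columns with made-up prior probabilities.
columns = [1, 3, 4, 7]
priors = [0.10, 0.25, 0.60, 0.05]
chosen = greedy_action(columns, priors)
println(chosen)  # prints 4
```

No search is involved here: the move depends only on a single network evaluation of the current state, which is why this player is much cheaper to run than the full MCTS agent.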

For more information on training a Connect Four agent using AlphaZero.jl, see our full tutorial.

- JuliaCon 2021 Talk

- Documentation Home

- An Introduction to AlphaZero

- Package Overview

- Connect-Four Tutorial

- Solving Your Own Games

- Hyperparameters Documentation

- Jonathan Laurent: main developer

- Pavel Dimens: logo design

- Marek Kaluba: hyperparameter tuning for the grid-world example

- Michał Łukomski: Mancala example, OpenSpiel wrapper

- Johannes Fischer: documentation improvements

Contributions to AlphaZero.jl are most welcome. Many contribution ideas are available in our contribution guide. Please do not hesitate to open a GitHub issue to share any idea, feedback or suggestion.

If you want to support this project and help it gain visibility, please consider starring the repository. Doing well on such metrics may also help us secure academic funding in the future. Also, if you use this software as part of your research, we would appreciate it if you cited it in your paper.

- AlphaGPU.jl: an AlphaZero implementation inspired by the "Scaling Scaling Laws with Board Games" paper, where almost everything happens on GPU (including the core MCTS logic). This implementation trades off some genericity and flexibility in exchange for unbeatable performance when used with small neural networks and environments that support batch simulation on GPU.

- ReinforcementLearning.jl: a reinforcement learning framework that leverages Julia's multiple dispatch to offer highly composable environments, algorithms and components. Future releases of AlphaZero.jl may build on this framework, as it gains better support for multithreaded and distributed RL.

- POMDPs.jl: a fast, elegant and well-designed framework for working with partially observable Markov decision processes.

This material is based upon work supported by the United States Air Force and DARPA under Contract No. FA9550-16-1-0288 and FA8750-18-C-0092. Any opinions, findings and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the United States Air Force and DARPA.