Please visit our website, https://infinigen.org

If you use Infinigen in your work, please cite our academic paper:

Alexander Raistrick*, Lahav Lipson*, Zeyu Ma* (*equal contribution, alphabetical order)

Lingjie Mei, Mingzhe Wang, Yiming Zuo, Karhan Kayan, Hongyu Wen, Beining Han,

Yihan Wang, Alejandro Newell, Hei Law, Ankit Goyal, Kaiyu Yang, Jia Deng

Conference on Computer Vision and Pattern Recognition (CVPR) 2023

@inproceedings{infinigen2023infinite,

title={Infinite Photorealistic Worlds Using Procedural Generation},

author={Raistrick, Alexander and Lipson, Lahav and Ma, Zeyu and Mei, Lingjie and Wang, Mingzhe and Zuo, Yiming and Kayan, Karhan and Wen, Hongyu and Han, Beining and Wang, Yihan and Newell, Alejandro and Law, Hei and Goyal, Ankit and Yang, Kaiyu and Deng, Jia},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages={12630--12641},

year={2023}

}

Installation is tested and working on the following platforms:

- Ubuntu 22.04.2 LTS

- GPU options tested: CPU only, GTX-1080, RTX-2080, RTX-3090, RTX-4090 (Driver 525, CUDA 12.0)

- RAM: 16GB

- macOS Monterey & Ventura, Apple M1 Pro, 16GB RAM

We are working on support for rendering with AMD GPUs. Windows users should use WSL2. More instructions coming soon.

⚠️ Errors with git pull / merge conflicts when migrating from v1.0.0 to v1.0.1

To properly display open-source, line-by-line git credits for our team, we have switched to a new version of the repo which does not share commit history with the version available from 6/17/2023 to 6/29/2023. We hope this will help open-source contributors identify the current "code owner" or person best equipped to support you with issues you encounter with any particular lines of the codebase.

You will not be able to pull or merge infinigen v1.0.1 into a v1.0.0 repo without significant git expertise. If you have no ongoing changes, we recommend you clone a new copy of the repo. We apologize for any inconvenience; please make an issue if you have problems updating or need help migrating ongoing changes. We understand this change is disruptive, but it is one-time-only and will not occur in future versions. Now that it is complete, we intend to iterate rapidly in the coming weeks; please see our roadmap and Twitter for updates.

Run these commands to get started:

git clone --recursive https://github.com/princeton-vl/infinigen.git

cd infinigen

conda create --name infinigen python=3.10

conda activate infinigen

bash install.sh

install.sh may take significant time to download Blender 3.3 and compile all source files.

Ignore non-fatal warnings. See Getting Help for guidelines on posting GitHub issues.

Run the following, or add it to your ~/.bashrc (Linux/WSL) or ~/.bash_profile (Mac):

# on Linux/WSL

export BLENDER="/PATH/TO/infinigen/blender/blender"

# on Mac

export BLENDER="/PATH/TO/infinigen/Blender.app/Contents/MacOS/Blender"
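Since every later command depends on the BLENDER variable, it can help to confirm it is set correctly before running anything. The following is a minimal sketch, not part of Infinigen; `resolve_blender` is a hypothetical helper name, and the only assumption is that BLENDER should point at an existing executable file, as in the exports above.

```python
import os
from pathlib import Path


def resolve_blender(env=None):
    """Return the Blender executable path from $BLENDER, or raise a clear error.

    Hypothetical helper for illustration; Infinigen does not ship this function.
    """
    env = os.environ if env is None else env
    value = env.get("BLENDER")
    if not value:
        raise RuntimeError("BLENDER is not set; export it as described above")
    path = Path(value).expanduser()
    if not path.is_file():
        raise FileNotFoundError(f"BLENDER points to a missing file: {path}")
    if not os.access(path, os.X_OK):
        raise PermissionError(f"BLENDER is not executable: {path}")
    return path
```

Calling `resolve_blender()` with no arguments reads the current shell environment, so it reflects whatever your ~/.bashrc or ~/.bash_profile exported.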

(Optional) Running Infinigen in a Docker Container

Docker on Linux

In /infinigen/

make docker-build

make docker-setup

make docker-run

To enable CUDA compilation, use make docker-build-cuda instead of make docker-build.

To run without GPU passthrough, use make docker-run-no-gpu.

To run without OpenGL ground truth, use make docker-run-no-opengl.

To run without either, use make docker-run-no-gpu-opengl.

Note: make docker-setup can be skipped if not using OpenGL.

Use exit to exit the container and docker exec -it infinigen bash to re-enter the container as needed. Remember to conda activate infinigen before running scenes.

Docker on Windows

Install WSL2 and Docker Desktop, with "Use the WSL 2 based engine..." enabled in settings. Keep the Docker Desktop application open while running containers. Then follow instructions as above.

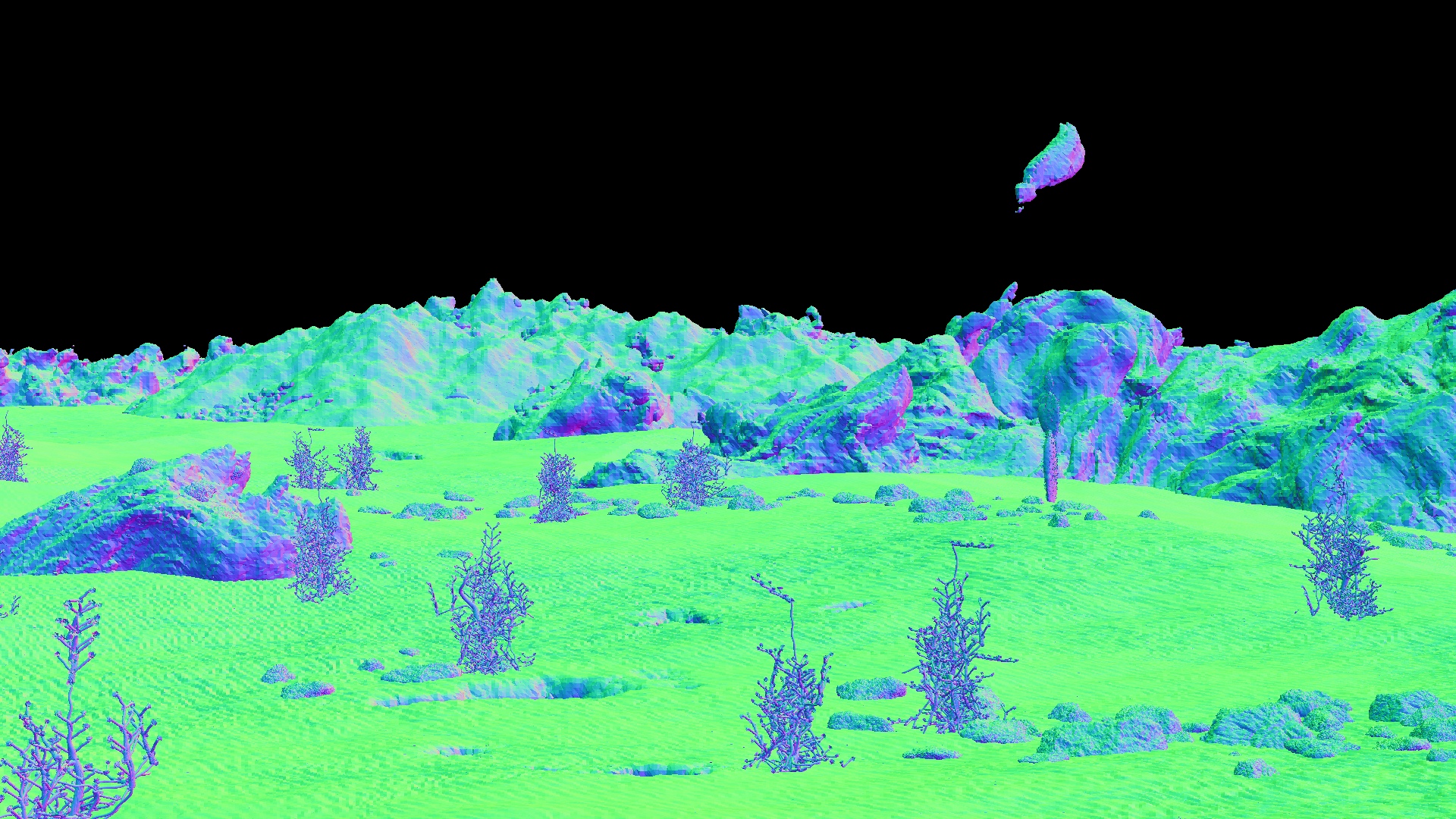

This guide will show you how to generate an image and its corresponding ground-truth, similar to those shown above.

Infinigen generates scenes by running multiple tasks (usually executed automatically, like in Generate image(s) in one command). Here we will run them one by one to demonstrate. These commands take approximately 10 minutes and 16GB of memory to execute on an M1 Mac or Linux Desktop.

cd worldgen

mkdir outputs

# Generate a scene layout

$BLENDER -noaudio --background --python generate.py -- --seed 0 --task coarse -g desert simple --output_folder outputs/helloworld/coarse

# Populate unique assets

$BLENDER -noaudio --background --python generate.py -- --seed 0 --task populate fine_terrain -g desert simple --input_folder outputs/helloworld/coarse --output_folder outputs/helloworld/fine

# Render RGB images

$BLENDER -noaudio --background --python generate.py -- --seed 0 --task render -g desert simple --input_folder outputs/helloworld/fine --output_folder outputs/helloworld/frames

# Render again for accurate ground-truth

$BLENDER -noaudio --background --python generate.py -- --seed 0 --task render -g desert simple --input_folder outputs/helloworld/fine --output_folder outputs/helloworld/frames -p render.render_image_func=@flat/render_image
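The four commands above differ only in their task, input folder, output folder and one extra override, so the pattern can be sketched in Python. This is an illustrative sketch only: `hello_world_commands` is a hypothetical helper that rebuilds the same argument lists as the commands above; it does not call Blender itself, and falling back to a plain `blender` executable when BLENDER is unset is an assumption.

```python
import os
import shlex


def hello_world_commands(blender, seed=0, configs=("desert", "simple"),
                         root="outputs/helloworld"):
    """Rebuild the four hello-world commands as argument lists (hypothetical helper)."""
    base = [blender, "-noaudio", "--background", "--python", "generate.py",
            "--", "--seed", str(seed)]

    def step(tasks, out, inp=None, extra=()):
        cmd = base + ["--task", *tasks, "-g", *configs]
        if inp is not None:
            cmd += ["--input_folder", f"{root}/{inp}"]
        cmd += ["--output_folder", f"{root}/{out}", *extra]
        return cmd

    return [
        step(["coarse"], "coarse"),                             # scene layout
        step(["populate", "fine_terrain"], "fine", "coarse"),   # unique assets
        step(["render"], "frames", "fine"),                     # RGB images
        step(["render"], "frames", "fine",                      # ground-truth pass
             extra=["-p", "render.render_image_func=@flat/render_image"]),
    ]


if __name__ == "__main__":
    # Print the commands for inspection; run them from the worldgen folder.
    for cmd in hello_world_commands(os.environ.get("BLENDER", "blender")):
        print(shlex.join(cmd))
```

Each list can be passed to `subprocess.run(cmd, check=True)` from inside worldgen if you prefer to drive the steps from Python rather than the shell.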

Output logs should indicate what the code is working on. Use --debug for even more detail. After each command completes you can inspect its --output_folder for results, including running $BLENDER outputs/helloworld/coarse/scene.blend or similar to view Blender files. We hide many meshes by default for viewport stability; to view them, click "Render" or use the UI to unhide them.

We also provide an (optional) separate pipeline for extracting the full set of annotations from each image or scene. Refer to GroundTruthAnnotations.md for compilation instructions, data format specifications and an extended "Hello World".

We provide tools/manage_datagen_jobs.py, a utility which runs these or similar steps automatically.

python -m tools.manage_datagen_jobs --output_folder outputs/hello_world --num_scenes 1 --specific_seed 0 --configs desert simple --pipeline_configs local_16GB monocular blender_gt --pipeline_overrides LocalScheduleHandler.use_gpu=False

Ready to remove the guardrails? Try the following:

- Swap desert for any of config/scene_types to get a different biome (or write your own crazy config!). You can also add in the name of any file in configs.

- Change the --specific_seed to any number to produce different scenes, or remove it and set --num_scenes 50 to try many random seeds.

- Remove simple to generate more detailed (but EXPENSIVE) scenes, as shown in the trailer.

- Read and customize generate.py to understand how infinigen works under the hood.

- Append -p compose_scene.grass_chance=1.0 to the first command to force grass (or any of generate.py's 'run_stage' asset names) to appear in the scene. You can modify the kwargs of any @gin.configurable() python function in the entire repo via this mechanism.
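The override mentioned in the last item has the shape `function.kwarg=value`. As a small illustration of that shape, the sketch below formats one such override as command-line arguments. `gin_override_args` is a hypothetical helper, not an Infinigen or Gin function, and its name check is a simplification that only accepts plain Python identifiers.

```python
def gin_override_args(function_name, kwarg, value):
    """Build the ["-p", "function.kwarg=value"] pair for one override.

    Hypothetical helper; the identifier check is a simplification and
    rejects anything that is not a plain Python name.
    """
    for part in (function_name, kwarg):
        if not part.isidentifier():
            raise ValueError(f"not a valid Python name: {part!r}")
    return ["-p", f"{function_name}.{kwarg}={value}"]


if __name__ == "__main__":
    # Reproduces the grass example from the list above.
    print(" ".join(gin_override_args("compose_scene", "grass_chance", 1.0)))
```

The resulting pair can be appended to the argument list of the first hello-world command.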

--configs enables you to customize the random distribution of visual content. If you do not select any config in the folder config/scene_types, the code chooses one for you at random.

--pipeline_configs determines what compute resources will be used, and what render jobs are necessary for each scene. A list of configs is available in tools/pipeline_configs. You must pick one config to determine the compute type (e.g. local_64GB or slurm) and one to determine the dataset type (such as monocular or monocular_video). Run python -m tools.manage_datagen_jobs --help for more options related to dataset generation.
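The "one compute config plus one dataset config" rule can be checked before a job is launched. The sketch below is an assumption-laden illustration: `datagen_command` is a hypothetical helper, and the `COMPUTE` and `DATASET` sets contain only the config names mentioned in this README, not the full contents of tools/pipeline_configs.

```python
import sys

# Only the names mentioned in this README; the real folder may contain more.
COMPUTE = {"local_16GB", "local_64GB", "local_256GB", "slurm"}
DATASET = {"monocular", "monocular_video", "stereo_video"}


def datagen_command(output_folder, num_scenes, configs, pipeline_configs, seed=None):
    """Build a manage_datagen_jobs argument list (hypothetical helper)."""
    n_compute = sum(c in COMPUTE for c in pipeline_configs)
    n_dataset = sum(c in DATASET for c in pipeline_configs)
    if n_compute != 1 or n_dataset != 1:
        raise ValueError("pick exactly one compute config and one dataset config")
    cmd = [sys.executable, "-m", "tools.manage_datagen_jobs",
           "--output_folder", output_folder, "--num_scenes", str(num_scenes)]
    if seed is not None:
        cmd += ["--specific_seed", str(seed)]
    if configs:  # omit the flag entirely to let the code choose a scene type
        cmd += ["--configs", *configs]
    cmd += ["--pipeline_configs", *pipeline_configs]
    return cmd
```

Extra pipeline configs such as blender_gt or cuda_terrain pass through unchanged, since they fall in neither set.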

If you intend to use CUDA-accelerated terrain (--pipeline_configs cuda_terrain), you must run install.sh on a CUDA-enabled machine.

Infinigen uses Google's "Gin Config" heavily, and we encourage you to consult their documentation to familiarize yourself with its capabilities.

💡 Generating high quality videos / Avoiding terrain aliasing

To render high quality videos as shown in the intro video, we ran commands similar to the following, on our SLURM cluster.

python -m tools.manage_datagen_jobs --output_folder outputs/my_videos --num_scenes 500 \
--pipeline_configs slurm monocular_video cuda_terrain opengl_gt \
--cleanup big_files --warmup_sec 60000 --configs trailer high_quality_terrain

Our terrain system resolves its signed distance function (SDF) to view-specific meshes, which must be updated as the camera moves. For video rendering, we strongly recommend using the high_quality_terrain config to avoid perceptible flickering and temporal aliasing. This config meshes the SDF at very high detail to create seamless video. However, it has high compute costs, so we recommend also using --pipeline_configs cuda_terrain on a machine with an NVIDIA GPU. For applications with fast-moving cameras, you may need to update the terrain mesh more frequently by decreasing iterate_scene_tasks.view_block_size = 16 in worldgen/tools/pipeline_configs/monocular_video.gin.
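To see why a smaller view_block_size means more frequent terrain updates, consider the sketch below. It rests on an assumption this README does not confirm: that view_block_size is the number of consecutive frames sharing one terrain mesh. `view_blocks` is a hypothetical helper, not Infinigen code.

```python
def view_blocks(frame_start, frame_end, view_block_size):
    """Split an inclusive frame range into consecutive blocks.

    ASSUMPTION: each block shares a single terrain mesh, so more blocks
    means more mesh updates (and more compute).
    """
    if view_block_size < 1:
        raise ValueError("view_block_size must be at least 1")
    return [
        (start, min(start + view_block_size - 1, frame_end))
        for start in range(frame_start, frame_end + 1, view_block_size)
    ]


if __name__ == "__main__":
    print(view_blocks(1, 48, 16))      # three blocks of 16 frames
    print(len(view_blocks(1, 48, 8)))  # halving the block size doubles the count
```

Under that assumption, halving the block size doubles the number of terrain meshes generated for the same clip, which is the cost to weigh against reduced flicker.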

As always, you may attempt to switch the compute platform (e.g. from slurm to local_256GB) or the data format (e.g. from monocular_video to stereo_video).

❗ Infinigen provides a ground-truth system for generating diverse automatic annotations for computer vision. See GroundTruthAnnotations.md for the docs.

Infinigen has evolved significantly since the version described in our CVPR paper. It now features some procedural code obtained from the internet under CC-0 licenses, which is marked with code comments where applicable; no such code was present in the system for the CVPR version.

Infinigen is an ongoing research project, and has some known issues. Through experimenting with Infinigen's code and config files, you will find scenes which crash or cannot be handled on your hardware. Infinigen scenes are randomized, with a long tail of possible scene complexity and thus compute requirements. If you encounter a scene that does not fit your computing hardware, you should try other seeds, use other config files, or follow up for help.

Please see our project roadmap and follow us at https://twitter.com/PrincetonVL for updates.

We welcome contributions! You can contribute in many ways:

- Contribute code to this repository - We welcome code contributions. More guidelines coming soon.

- Contribute procedural generators - worldgen/nodes/node_transpiler/dev_script.py provides tools to convert artist-friendly Blender Nodes into python code. Tutorials and guidelines coming soon.

- Contribute pre-generated data - Anyone can contribute their computing power to create data and share it with the community. Please stay tuned for a repository of pre-generated data.

Please post to this repository's GitHub Issues page for help. Please run your command with --debug, and let us know:

- What is your computing setup, including OS version, CPU, RAM, GPU(s) and any drivers?

- What version of the code are you using (link a commit hash), and what, if any, modifications have you made (new configs, code edits)?

- What exact command did you run?

- What were the output logs of the command you ran?

  - If using manage_datagen_jobs, look in outputs/MYJOB/MYSEED/logs/ to find the right one.

- What was the exact python error and stacktrace, if applicable?
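Some of the details requested above can be gathered with a short script. The sketch below is illustrative only: `system_summary` and `find_logs` are hypothetical helpers, and the only layout assumed is the outputs/MYJOB/MYSEED/logs/ path mentioned above. It does not detect GPUs or drivers, which you still need to report by hand.

```python
import platform
from pathlib import Path


def system_summary():
    """Collect basic OS, CPU architecture and Python details for an issue report."""
    return {
        "os": platform.platform(),
        "machine": platform.machine(),
        "python": platform.python_version(),
    }


def find_logs(job_folder, seed):
    """List files under <job_folder>/<seed>/logs/, sorted by name."""
    log_dir = Path(job_folder) / str(seed) / "logs"
    return sorted(p for p in log_dir.glob("*") if p.is_file())


if __name__ == "__main__":
    for key, value in system_summary().items():
        print(f"{key}: {value}")
```

`find_logs` returns an empty list when the logs folder does not exist, so a wrong job or seed name shows up as "no logs found" rather than an exception.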

Infinigen wouldn't be possible without the fantastic work of the Blender Foundation and its open-source contributors. Infinigen uses many open source projects, with special thanks to Land-Lab, BlenderProc and Blender-Differential-Growth.

We thank Thomas Kole for providing procedural clouds (which are more photorealistic than our original version) and Pedro P. Lopes for the autoexposure nodegraph.

We learned tremendously from online tutorials of Andrew Price, Artisans of Vaul, Bad Normals, Blender Tutorial Channel, blenderbitesize, Blendini, Bradley Animation, CGCookie, CGRogue, Creative Shrimp, CrowdRender, Dr. Blender, HEY Pictures, Ian Hubert, Kev Binge, Lance Phan, MaxEdge, Mr. Cheebs, PixelicaCG, Polyfjord, Robbie Tilton, Ryan King Art, Sam Bowman and yogigraphics. These tutorials provided procedural generators for our early experimentation and served as inspiration for our own implementations in the official release of Infinigen. They are acknowledged in file header comments where applicable.