🤗 HF Repo • 🐦 Twitter • 📃 [WizardLM] @ICLR2024 • 📃 [WizardCoder] @ICLR2024 • 📃 [WizardMath]

👋 Join our Discord

Unofficial Video Introductions

Thanks to our enthusiastic friends for their lively and interesting video introductions.

- NEW WizardLM 70b 🔥 Giant Model...Insane Performance

- GET WizardLM NOW! 7B LLM KING That Can Beat ChatGPT! I'm IMPRESSED!

- WizardLM: Enhancing Large Language Models to Follow Complex Instructions

- WizardCoder AI Is The NEW ChatGPT's Coding TWIN!

- 🔥🔥🔥[2024/01/04] We released WizardCoder-33B-V1.1, trained from deepseek-coder-33b-base. It is the SOTA OSS Code LLM on the EvalPlus Leaderboard, achieving 79.9 pass@1 on HumanEval, 73.2 pass@1 on HumanEval-Plus, 78.9 pass@1 on MBPP, and 66.9 pass@1 on MBPP-Plus. WizardCoder-33B-V1.1 outperforms ChatGPT 3.5, Gemini Pro, and DeepSeek-Coder-33B-instruct on HumanEval and HumanEval-Plus pass@1. On MBPP and MBPP-Plus pass@1, it is comparable with ChatGPT 3.5 and surpasses Gemini Pro.

- [2023/08/26] We released WizardCoder-Python-34B-V1.0, which achieves 73.2 pass@1 and surpasses GPT4 (2023/03/15), ChatGPT-3.5, and Claude2 on the HumanEval benchmark. For more details, please refer to WizardCoder.

- [2023/06/16] We released WizardCoder-15B-V1.0, which surpasses Claude-Plus (+6.8), Bard (+15.3) and InstructCodeT5+ (+22.3) on the HumanEval benchmark. For more details, please refer to WizardCoder.

| Model | Checkpoint | Paper | HumanEval | HumanEval+ | MBPP | MBPP+ |
|---|---|---|---|---|---|---|
| GPT-4-Turbo (Nov 2023) | - | - | 85.4 | 81.7 | 83.0 | 70.7 |
| GPT-4 (May 2023) | - | - | 88.4 | 76.8 | - | - |
| GPT-3.5-Turbo (Nov 2023) | - | - | 72.6 | 65.9 | 81.7 | 69.4 |
| Gemini Pro | - | - | 63.4 | 55.5 | 72.9 | 57.9 |
| DeepSeek-Coder-33B-instruct | - | - | 78.7 | 72.6 | 78.7 | 66.7 |
| WizardCoder-33B-V1.1 | 🤗 HF Link | 📃 [WizardCoder] | 79.9 | 73.2 | 78.9 | 66.9 |
| WizardCoder-Python-34B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 73.2 | 64.6 | 73.2 | 59.9 |
| WizardCoder-15B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 59.8 | 52.4 | - | - |
| WizardCoder-Python-13B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 64.0 | - | - | - |
| WizardCoder-Python-7B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 55.5 | - | - | - |
| WizardCoder-3B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 34.8 | - | - | - |
| WizardCoder-1B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 23.8 | - | - | - |

- [12/19/2023] 🔥 We released WizardMath-7B-V1.1, trained from Mistral-7B. It is the SOTA 7B math LLM, achieving 83.2 pass@1 on GSM8k and 33.0 pass@1 on MATH.

- [12/19/2023] 🔥 WizardMath-7B-V1.1 outperforms ChatGPT 3.5, Gemini Pro, Mixtral MOE, and Claude Instant on GSM8K pass@1.

- [12/19/2023] 🔥 WizardMath-7B-V1.1 is comparable with ChatGPT 3.5 and Gemini Pro, and surpasses Mixtral MOE, on MATH pass@1.

- 🔥 Our WizardMath-70B-V1.0 model slightly outperforms some closed-source LLMs on GSM8K, including ChatGPT 3.5, Claude Instant 1 and PaLM 2 540B.

- 🔥 Our WizardMath-70B-V1.0 model achieves 81.6 pass@1 on the GSM8k benchmark, which is 24.8 points higher than the SOTA open-source LLM.

- 🔥 Our WizardMath-70B-V1.0 model achieves 22.7 pass@1 on the MATH benchmark, which is 9.2 points higher than the SOTA open-source LLM.

| Model | Checkpoint | Paper | GSM8k | MATH |
|---|---|---|---|---|
| WizardMath-7B-V1.1 | 🤗 HF Link | 📃 [WizardMath] | 83.2 | 33.0 |
| WizardMath-70B-V1.0 | 🤗 HF Link | 📃 [WizardMath] | 81.6 | 22.7 |
| WizardMath-13B-V1.0 | 🤗 HF Link | 📃 [WizardMath] | 63.9 | 14.0 |
| WizardMath-7B-V1.0 | 🤗 HF Link | 📃 [WizardMath] | 54.9 | 10.7 |

- [08/09/2023] We released the WizardLM-70B-V1.0 model. Here is the Full Model Weight.

| Model | Checkpoint | Paper | MT-Bench | AlpacaEval | GSM8k | HumanEval | Demo | License |
|---|---|---|---|---|---|---|---|---|
| WizardLM-70B-V1.0 | 🤗 HF Link | 📃Coming Soon | 7.78 | 92.91% | 77.6% | 50.6 | | Llama 2 License |
| WizardLM-13B-V1.2 | 🤗 HF Link | | 7.06 | 89.17% | 55.3% | 36.6 | Demo | Llama 2 License |
| WizardLM-13B-V1.1 | 🤗 HF Link | | 6.76 | 86.32% | | 25.0 | | Non-commercial |
| WizardLM-30B-V1.0 | 🤗 HF Link | | 7.01 | | | 37.8 | | Non-commercial |
| WizardLM-13B-V1.0 | 🤗 HF Link | | 6.35 | 75.31% | | 24.0 | | Non-commercial |
| WizardLM-7B-V1.0 | 🤗 HF Link | 📃 [WizardLM] | | | | 19.1 | | Non-commercial |

Please cite the paper if you use the data or code from WizardLM.

@inproceedings{

xu2024wizardlm,

title={Wizard{LM}: Empowering Large Pre-Trained Language Models to Follow Complex Instructions},

author={Can Xu and Qingfeng Sun and Kai Zheng and Xiubo Geng and Pu Zhao and Jiazhan Feng and Chongyang Tao and Qingwei Lin and Daxin Jiang},

booktitle={The Twelfth International Conference on Learning Representations},

year={2024},

url={https://openreview.net/forum?id=CfXh93NDgH}

}

Please cite the paper if you use the data or code from WizardCoder.

@inproceedings{

luo2024wizardcoder,

title={WizardCoder: Empowering Code Large Language Models with Evol-Instruct},

author={Ziyang Luo and Can Xu and Pu Zhao and Qingfeng Sun and Xiubo Geng and Wenxiang Hu and Chongyang Tao and Jing Ma and Qingwei Lin and Daxin Jiang},

booktitle={The Twelfth International Conference on Learning Representations},

year={2024},

url={https://openreview.net/forum?id=UnUwSIgK5W}

}

Please cite the paper if you use the model, code, data, or paper from WizardMath.

@article{luo2023wizardmath,

title={WizardMath: Empowering Mathematical Reasoning for Large Language Models via Reinforced Evol-Instruct},

author={Luo, Haipeng and Sun, Qingfeng and Xu, Can and Zhao, Pu and Lou, Jianguang and Tao, Chongyang and Geng, Xiubo and Lin, Qingwei and Chen, Shifeng and Zhang, Dongmei},

journal={arXiv preprint arXiv:2308.09583},

year={2023}

}

❗Regarding common concerns about the dataset:

Recently, there have been clear changes in our organization's open-source policy and regulations covering code, data, and models. Despite this, we worked hard to get the model weights released first; the data is subject to stricter auditing and is under review by our legal team. Our researchers have no authority to release it publicly without authorization. Thank you for your understanding.

- 📣 We are looking for highly motivated students to join us as interns to create more intelligent AI together. Please contact [email protected]

Note on model system prompt usage:

To obtain results identical to our demo, please strictly follow the prompts and invocation methods provided in "src/infer_wizardlm13b.py" when using our model for inference. Our model adopts the prompt format from Vicuna and supports multi-turn conversation.

For WizardLM, the prompt should be as follows:

A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful, detailed, and polite answers to the user's questions. USER: Hi ASSISTANT: Hello.</s>USER: Who are you? ASSISTANT: I am WizardLM.</s>......
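
As a minimal sketch, the multi-turn format above could be assembled in Python as follows. The function name `build_wizardlm_prompt` and its parameters are hypothetical and are not taken from "src/infer_wizardlm13b.py", which remains the reference for exact behavior.

```python
# Hypothetical helper: assembles a Vicuna-style multi-turn prompt in the
# layout shown above. Names here are illustrative, not from the repository.
SYSTEM = (
    "A chat between a curious user and an artificial intelligence assistant. "
    "The assistant gives helpful, detailed, and polite answers to the user's questions."
)


def build_wizardlm_prompt(history, user_message, system=SYSTEM, eos="</s>"):
    """Build a prompt from completed (user, assistant) turns plus a new user message."""
    prompt = system + " "
    for user, assistant in history:
        # Each completed turn ends with the end-of-sequence marker.
        prompt += f"USER: {user} ASSISTANT: {assistant}{eos}"
    # The final turn is left open so the model generates the assistant reply.
    prompt += f"USER: {user_message} ASSISTANT:"
    return prompt


if __name__ == "__main__":
    print(build_wizardlm_prompt([("Hi", "Hello.")], "Who are you?"))
```

Leaving the last `ASSISTANT:` open matches the example above, where each finished assistant reply is followed by `</s>` before the next `USER:` turn.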

For WizardCoder, the prompt should be as follows:

"Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{instruction}\n\n### Response:"

For WizardMath, the prompts should be as follows:

Default version:

"Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{instruction}\n\n### Response:"

CoT Version: (❗For simple math questions, we do NOT recommend using the CoT prompt.)

"Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{instruction}\n\n### Response: Let's think step by step."

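The WizardCoder and WizardMath templates above share the same instruction wrapper, and the CoT version only appends a suffix. A small sketch of filling them in, where `build_instruction_prompt` and the `cot` flag are hypothetical names introduced for illustration:

```python
# Hypothetical helper: fills the instruction template quoted above.
# The template text is copied from the prompts in this README.
INSTRUCTION_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:"
)
COT_SUFFIX = " Let's think step by step."


def build_instruction_prompt(instruction, cot=False):
    """Return the default prompt, or the CoT variant when cot=True."""
    prompt = INSTRUCTION_TEMPLATE.format(instruction=instruction)
    return prompt + COT_SUFFIX if cot else prompt


if __name__ == "__main__":
    print(build_instruction_prompt("Write a function that reverses a string."))
    print(build_instruction_prompt("What is 12 * 7 + 5?", cot=True))
```

The `cot` flag defaults to `False`, in line with the recommendation not to use the CoT prompt for simple math questions.
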
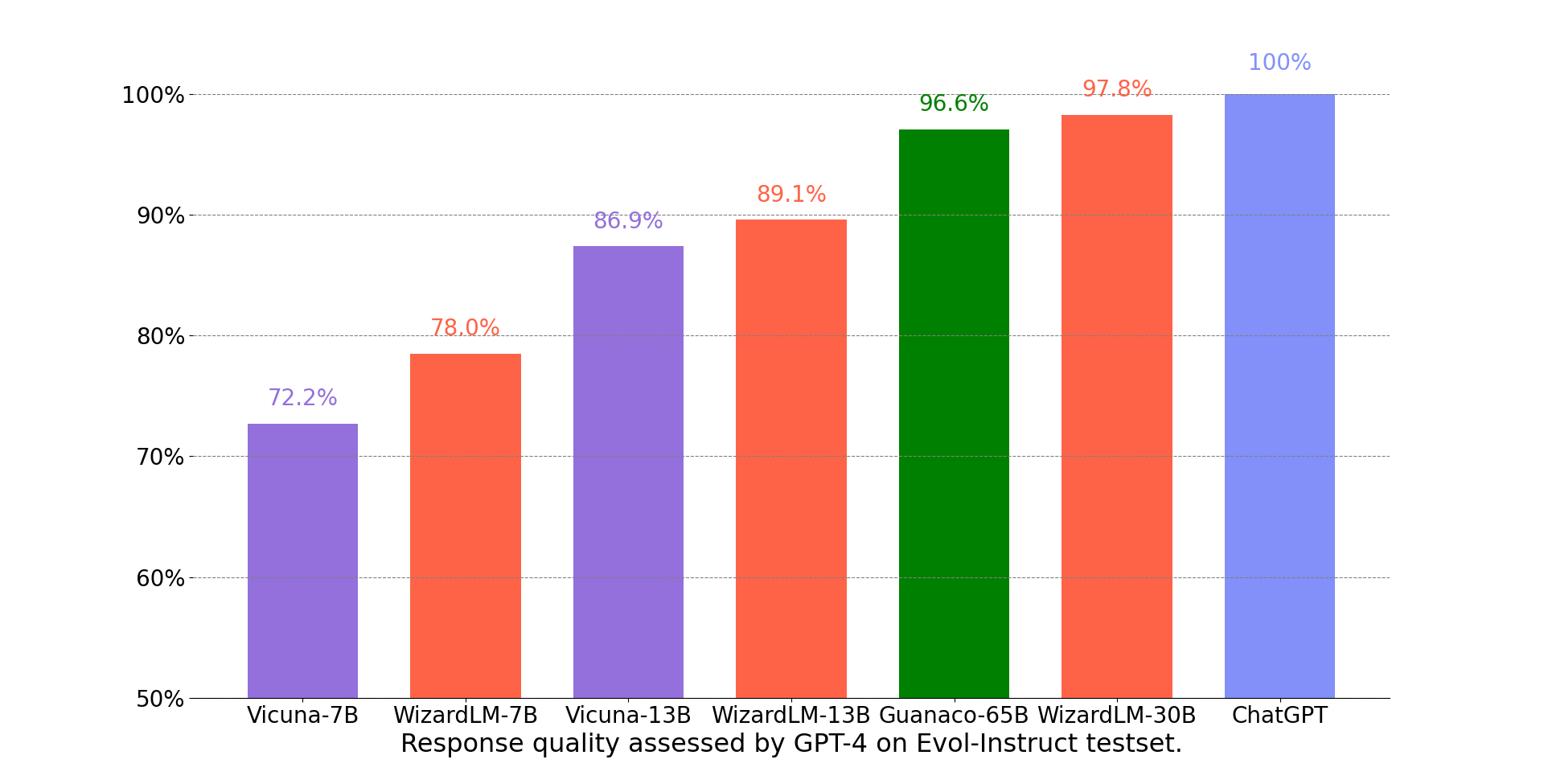

We adopt the automatic evaluation framework based on GPT-4 proposed by FastChat to assess the performance of chatbot models. As shown in the following figure, WizardLM-30B achieved better results than Guanaco-65B.

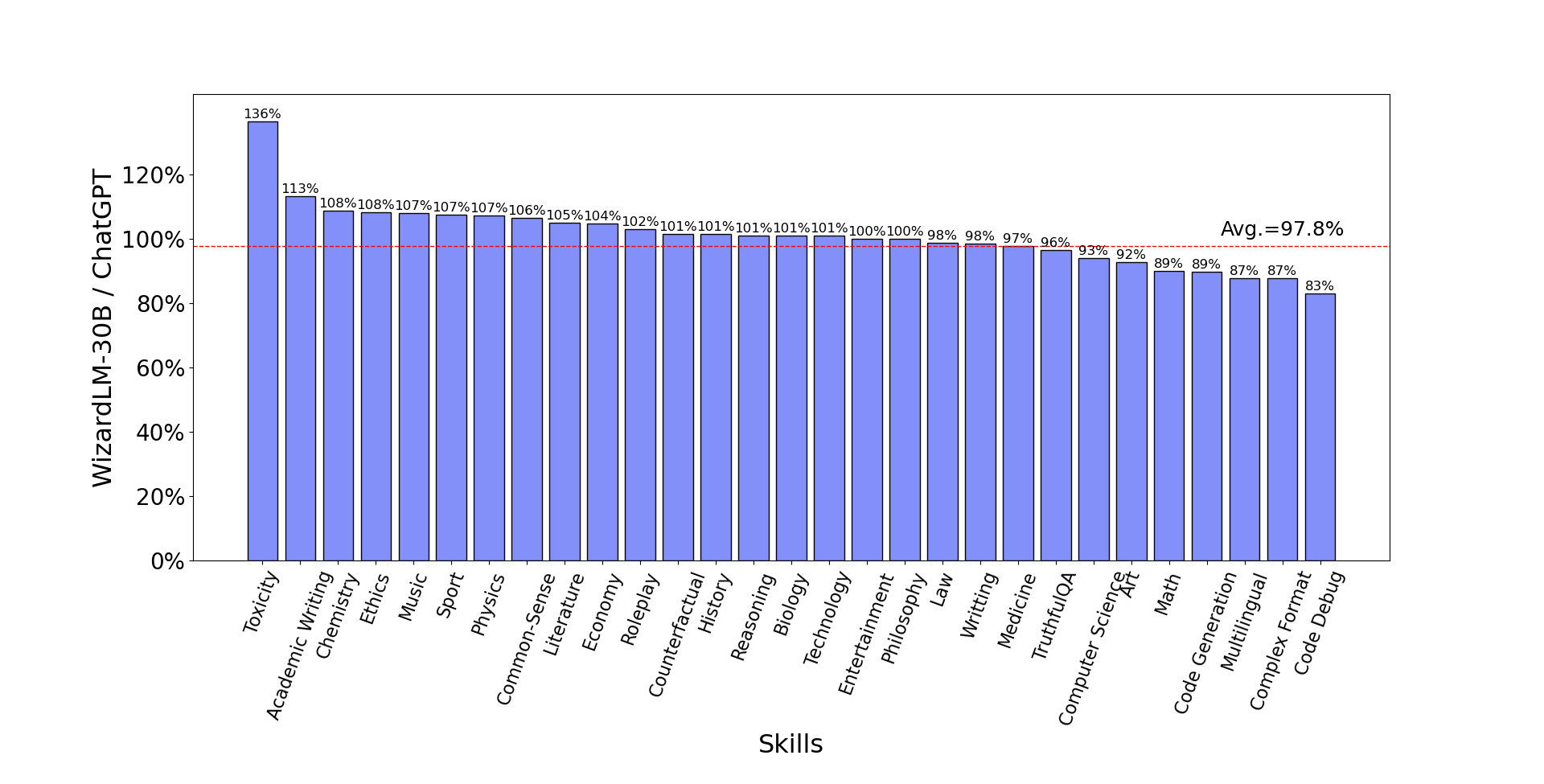

The following figure compares the skills of WizardLM-30B and ChatGPT on the Evol-Instruct test set. The results indicate that WizardLM-30B achieves 97.8% of ChatGPT’s performance on average, reaching nearly 100% (or more) of ChatGPT’s capacity on 18 skills and more than 90% on 24 skills.

The following table compares WizardLMs with other LLMs on NLP foundation tasks. The results indicate that WizardLMs consistently outperform the LLaMA models of the same size. Furthermore, our WizardLM-30B model shows performance comparable to OpenAI's Text-davinci-003 on the MMLU and HellaSwag benchmarks.

| Model | MMLU 5-shot | ARC 25-shot | TruthfulQA 0-shot | HellaSwag 10-shot | Average |
|---|---|---|---|---|---|
| Text-davinci-003 | 56.9 | 85.2 | 59.3 | 82.2 | 70.9 |
| Vicuna-13b 1.1 | 51.3 | 53.0 | 51.8 | 80.1 | 59.1 |
| Guanaco 30B | 57.6 | 63.7 | 50.7 | 85.1 | 64.3 |
| WizardLM-7B 1.0 | 42.7 | 51.6 | 44.7 | 77.7 | 54.2 |
| WizardLM-13B 1.0 | 52.3 | 57.2 | 50.5 | 81.0 | 60.2 |
| WizardLM-30B 1.0 | 58.8 | 62.5 | 52.4 | 83.3 | 64.2 |
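
The Average column appears to be the unweighted mean of the four benchmark scores; this is an inference from the numbers, not something the table states. A short sketch that recomputes it from the values above and checks agreement to within rounding:

```python
# Scores copied from the table above: (MMLU, ARC, TruthfulQA, HellaSwag, reported average).
# Assumption: "Average" is the plain mean of the four benchmark columns.
ROWS = {
    "Text-davinci-003": (56.9, 85.2, 59.3, 82.2, 70.9),
    "Vicuna-13b 1.1": (51.3, 53.0, 51.8, 80.1, 59.1),
    "Guanaco 30B": (57.6, 63.7, 50.7, 85.1, 64.3),
    "WizardLM-7B 1.0": (42.7, 51.6, 44.7, 77.7, 54.2),
    "WizardLM-13B 1.0": (52.3, 57.2, 50.5, 81.0, 60.2),
    "WizardLM-30B 1.0": (58.8, 62.5, 52.4, 83.3, 64.2),
}


def mean_score(row):
    """Unweighted mean of the four benchmark scores in a row."""
    benchmarks = row[:4]
    return sum(benchmarks) / len(benchmarks)


def max_deviation(rows):
    """Largest gap between the recomputed mean and the reported average."""
    return max(abs(mean_score(r) - r[4]) for r in rows.values())


if __name__ == "__main__":
    for name, row in ROWS.items():
        print(f"{name}: recomputed {mean_score(row):.3f}, reported {row[4]}")
```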

The following table compares WizardLMs with several other LLMs on the code generation task, namely HumanEval. The evaluation metric is pass@1. The results indicate that WizardLMs consistently outperform the LLaMA models of the same size. Furthermore, our WizardLM-30B model surpasses StarCoder and OpenAI's code-cushman-001. Moreover, our Code LLM, WizardCoder, demonstrates exceptional performance, achieving a pass@1 score of 57.3 and surpassing the open-source SOTA by approximately 20 points.

| Model | HumanEval Pass@1 |
|---|---|
| LLaMA-7B | 10.5 |
| LLaMA-13B | 15.8 |
| CodeGen-16B-Multi | 18.3 |
| CodeGeeX | 22.9 |
| LLaMA-33B | 21.7 |
| LLaMA-65B | 23.7 |
| PaLM-540B | 26.2 |
| CodeGen-16B-Mono | 29.3 |
| code-cushman-001 | 33.5 |
| StarCoder | 33.6 |
| WizardLM-7B 1.0 | 19.1 |
| WizardLM-13B 1.0 | 24.0 |
| WizardLM-30B 1.0 | 37.8 |
| WizardCoder-15B 1.0 | 57.3 |
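
For readers unfamiliar with the metric, pass@k is commonly computed with the unbiased estimator introduced alongside HumanEval: for a problem with `n` generated samples of which `c` pass the unit tests, pass@k = 1 - C(n-c, k) / C(n, k), averaged over problems. The sketch below illustrates that estimator. This README does not state how many samples per problem were drawn for the numbers above, so the function names and the sample counts in the example are assumptions.

```python
from math import comb


def pass_at_k(n, c, k):
    """Unbiased pass@k estimate for one problem: n samples, c of them correct."""
    if n - c < k:
        # Fewer than k incorrect samples: every size-k subset contains a correct one.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results, k=1):
    """Average pass@k over problems, as a percentage.

    results: list of (n_samples, n_correct) pairs, one per problem.
    """
    return 100.0 * sum(pass_at_k(n, c, k) for n, c in results) / len(results)


if __name__ == "__main__":
    # Illustrative data only: four problems, one sample each, two solved.
    print(benchmark_pass_at_k([(1, 1), (1, 0), (1, 1), (1, 0)], k=1))
```

With a single sample per problem, pass@1 reduces to the fraction of problems whose one generated solution passes all tests.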

We welcome everyone to evaluate WizardLM with professional and difficult instructions, and to share examples of poor performance and suggestions in the issue discussion area. We are currently focused on improving Evol-Instruct and hope to address existing weaknesses and issues in the next version of WizardLM. After that, we will open the code and pipeline of the up-to-date Evol-Instruct algorithm and work with you to improve it.

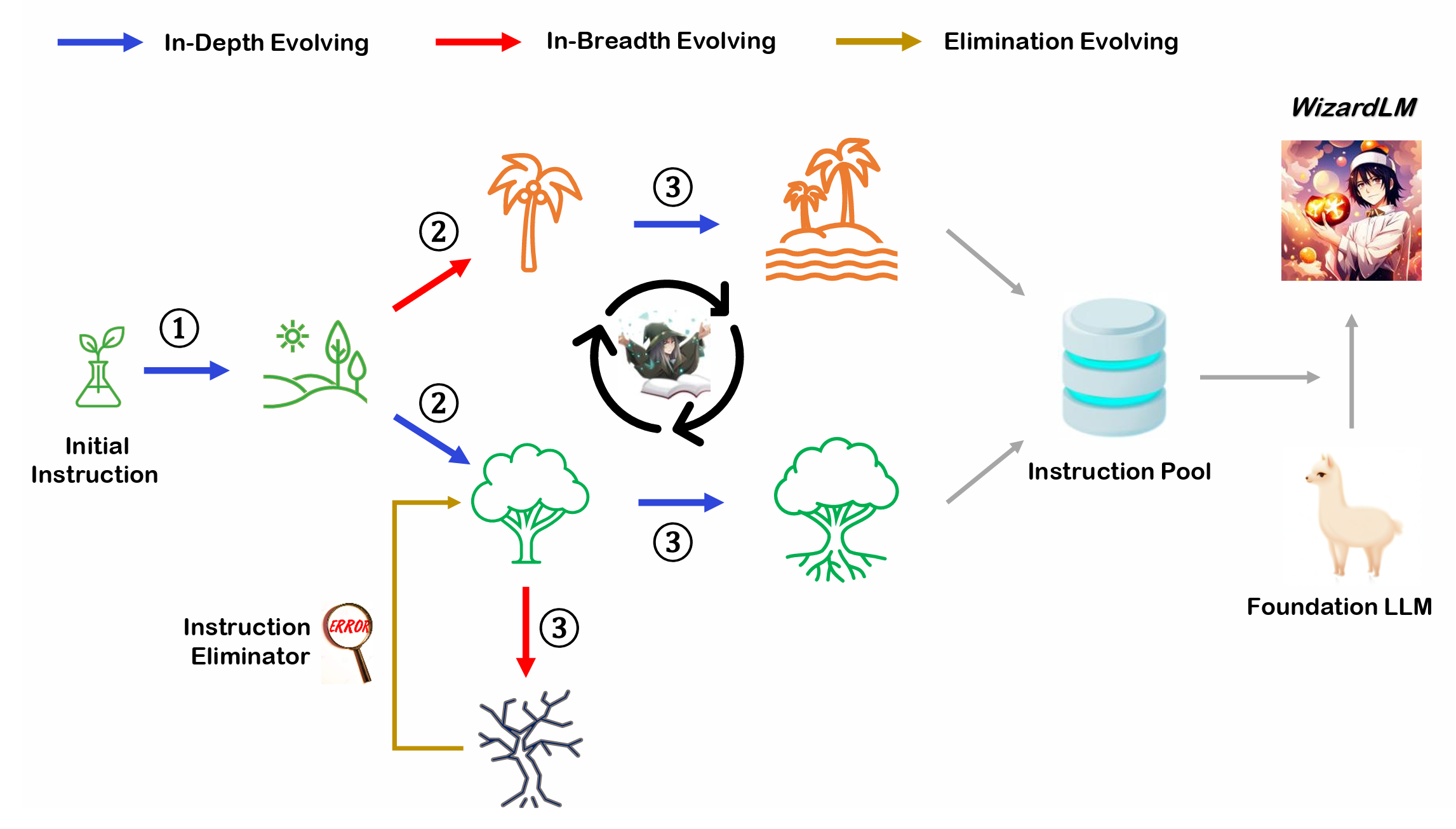

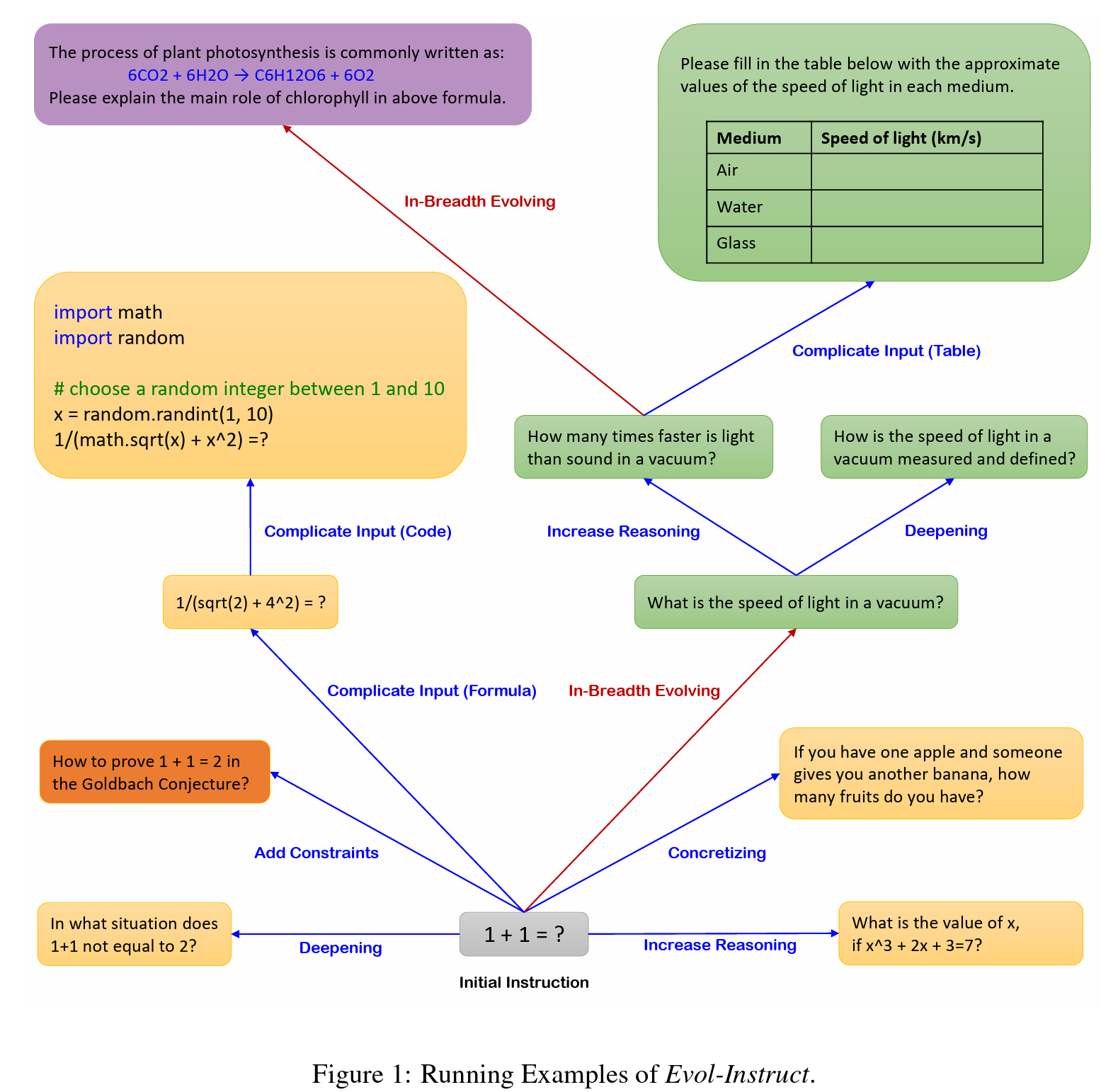

Evol-Instruct is a novel method that uses LLMs instead of humans to automatically mass-produce open-domain instructions across a range of difficulty levels and skills, in order to improve the performance of LLMs. You can easily embark on your own evolutionary journey with the Evol Script we provide.

The resources, including code, data, and model weights, associated with this project are restricted for academic research purposes only and cannot be used for commercial purposes. The content produced by any version of WizardLM is influenced by uncontrollable variables such as randomness, and therefore, the accuracy of the output cannot be guaranteed by this project. This project does not accept any legal liability for the content of the model output, nor does it assume responsibility for any losses incurred due to the use of associated resources and output results.