🐸TTS is a library for advanced Text-to-Speech generation. It's built on the latest research, was designed to achieve the best trade-off among ease-of-training, speed and quality. 🐸TTS comes with pretrained models, tools for measuring dataset quality and already used in 20+ languages for products and research projects.

📰 Subscribe to 🐸Coqui.ai Newsletter

📢 English Voice Samples and SoundCloud playlist

👩🏽🍳 TTS training recipes

📄 Text-to-Speech paper collection

Please use our dedicated channels for questions and discussion. Help is much more valuable if it's shared publicly, so that more people can benefit from it.

| Type | Platforms |

|---|---|

| 🚨 Bug Reports | GitHub Issue Tracker |

| ❔ FAQ | TTS/Wiki |

| 🎁 Feature Requests & Ideas | GitHub Issue Tracker |

| 👩💻 Usage Questions | Github Discussions |

| 🗯 General Discussion | Github Discussions or Gitter Room |

| Type | Links |

|---|---|

| 💾 Installation | TTS/README.md |

| 👩💻 Contributing | CONTRIBUTING.md |

| 👩🏾🏫 Tutorials and Examples | TTS/Wiki |

| 🚀 Released Models | TTS Releases and Experimental Models |

| 💻 Docker Image | Repository by @synesthesiam |

| 🖥️ Demo Server | TTS/server |

| 🤖 Synthesize speech | TTS/README.md |

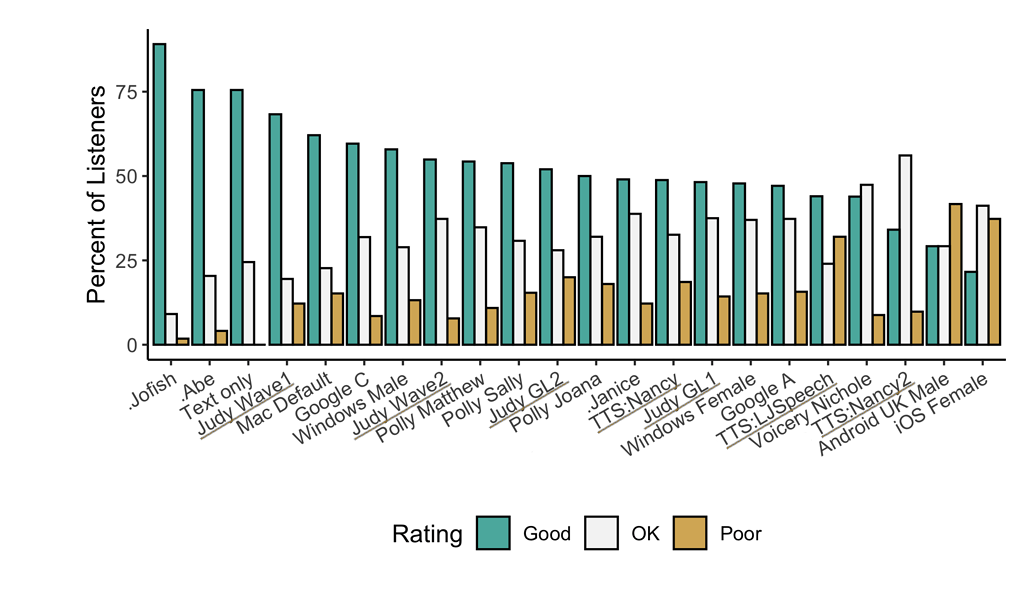

Underlined "TTS*" and "Judy*" are 🐸TTS models

- High performance Deep Learning models for Text2Speech tasks.

- Text2Spec models (Tacotron, Tacotron2, Glow-TTS, SpeedySpeech).

- Speaker Encoder to compute speaker embeddings efficiently.

- Vocoder models (MelGAN, Multiband-MelGAN, GAN-TTS, ParallelWaveGAN, WaveGrad, WaveRNN)

- Fast and efficient model training.

- Detailed training logs on console and Tensorboard.

- Support for multi-speaker TTS.

- Efficient Multi-GPUs training.

- Ability to convert PyTorch models to Tensorflow 2.0 and TFLite for inference.

- Released models in PyTorch, Tensorflow and TFLite.

- Tools to curate Text2Speech datasets under

dataset_analysis. - Demo server for model testing.

- Notebooks for extensive model benchmarking.

- Modular (but not too much) code base enabling easy testing for new ideas.

- Guided Attention: paper

- Forward Backward Decoding: paper

- Graves Attention: paper

- Double Decoder Consistency: blog

- Dynamic Convolutional Attention: paper

- MelGAN: paper

- MultiBandMelGAN: paper

- ParallelWaveGAN: paper

- GAN-TTS discriminators: paper

- WaveRNN: origin

- WaveGrad: paper

- HiFiGAN: paper

You can also help us implement more models. Some 🐸TTS related work can be found here.

🐸TTS is tested on Ubuntu 18.04 with python >= 3.6, < 3.9.

If you are only interested in synthesizing speech with the released 🐸TTS models, installing from PyPI is the easiest option.

pip install TTSIf you plan to code or train models, clone 🐸TTS and install it locally.

git clone https://github.com/coqui-ai/TTS

pip install -e .We use espeak-ng to convert graphemes to phonemes. You might need to install separately.

sudo apt-get install espeak-ngIf you are on Ubuntu (Debian), you can also run following commands for installation.

$ make system-deps # intended to be used on Ubuntu (Debian). Let us know if you have a diffent OS.

$ make installIf you are on Windows, 👑@GuyPaddock wrote installation instructions here.

|- notebooks/ (Jupyter Notebooks for model evaluation, parameter selection and data analysis.)

|- utils/ (common utilities.)

|- TTS

|- bin/ (folder for all the executables.)

|- train*.py (train your target model.)

|- distribute.py (train your TTS model using Multiple GPUs.)

|- compute_statistics.py (compute dataset statistics for normalization.)

|- convert*.py (convert target torch model to TF.)

|- tts/ (text to speech models)

|- layers/ (model layer definitions)

|- models/ (model definitions)

|- tf/ (Tensorflow 2 utilities and model implementations)

|- utils/ (model specific utilities.)

|- speaker_encoder/ (Speaker Encoder models.)

|- (same)

|- vocoder/ (Vocoder models.)

|- (same)

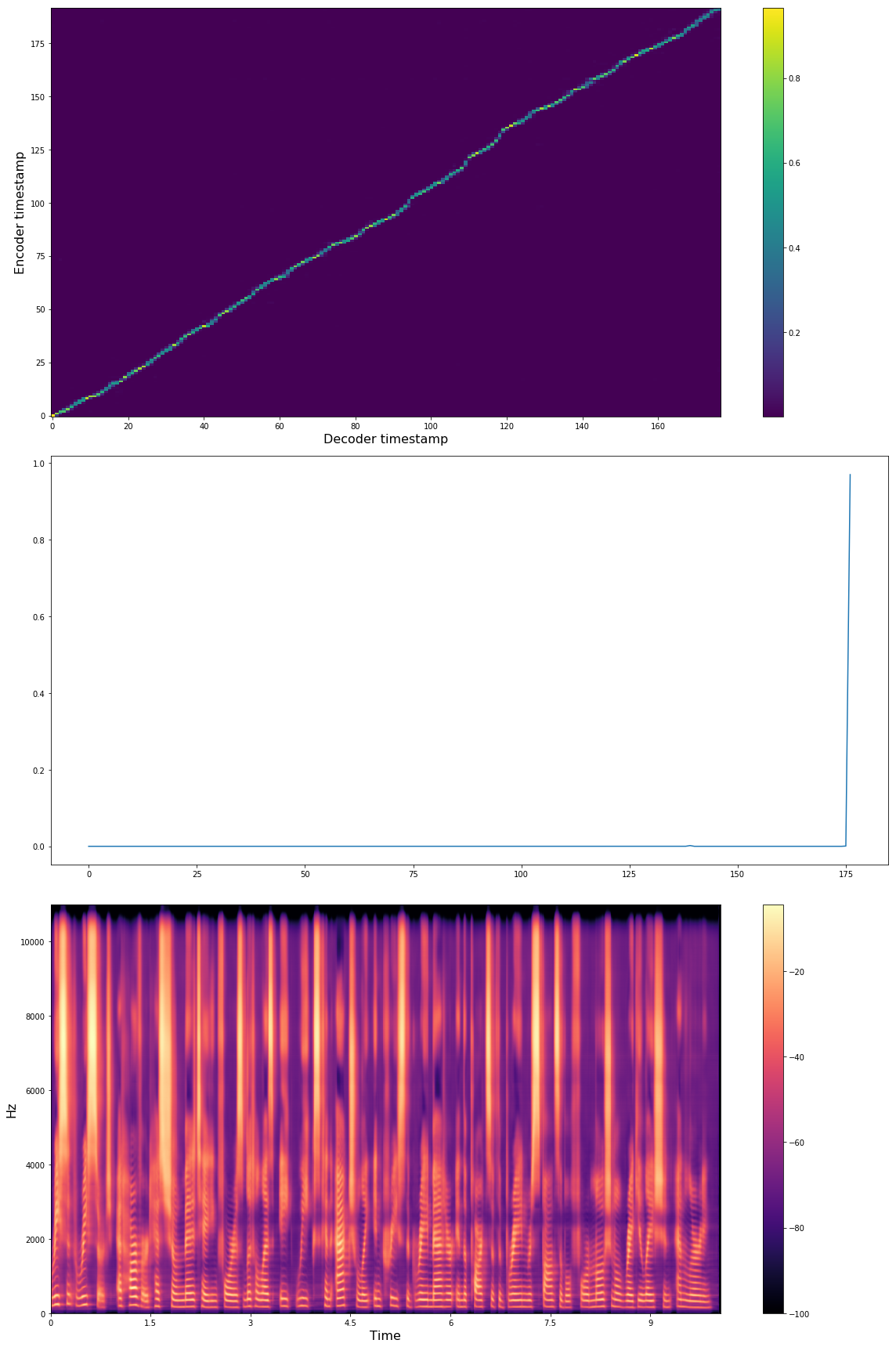

Below you see Tacotron model state after 16K iterations with batch-size 32 with LJSpeech dataset.

"Recent research at Harvard has shown meditating for as little as 8 weeks can actually increase the grey matter in the parts of the brain responsible for emotional regulation and learning."

Audio examples: soundcloud

🐸TTS provides a generic dataloader easy to use for your custom dataset.

You just need to write a simple function to format the dataset. Check datasets/preprocess.py to see some examples.

After that, you need to set dataset fields in config.json.

Some of the public datasets that we successfully applied 🐸TTS:

After the installation, 🐸TTS provides a CLI interface for synthesizing speech using pre-trained models. You can either use your own model or the release models under 🐸TTS.

Listing released 🐸TTS models.

tts --list_modelsRun a tts and a vocoder model from the released model list. (Simply copy and paste the full model names from the list as arguments for the command below.)

tts --text "Text for TTS" \

--model_name "<type>/<language>/<dataset>/<model_name>" \

--vocoder_name "<type>/<language>/<dataset>/<model_name>" \

--out_path folder/to/save/output.wavRun your own TTS model (Using Griffin-Lim Vocoder)

tts --text "Text for TTS" \

--model_path path/to/model.pth.tar \

--config_path path/to/config.json \

--out_path folder/to/save/output.wavRun your own TTS and Vocoder models

tts --text "Text for TTS" \

--config_path path/to/config.json \

--model_path path/to/model.pth.tar \

--out_path folder/to/save/output.wav \

--vocoder_path path/to/vocoder.pth.tar \

--vocoder_config_path path/to/vocoder_config.jsonNote: You can use ./TTS/bin/synthesize.py if you prefer running tts from the TTS project folder.

Here you can find a CoLab notebook for a hands-on example, training LJSpeech. Or you can manually follow the guideline below.

To start with, split metadata.csv into train and validation subsets respectively metadata_train.csv and metadata_val.csv. Note that for text-to-speech, validation performance might be misleading since the loss value does not directly measure the voice quality to the human ear and it also does not measure the attention module performance. Therefore, running the model with new sentences and listening to the results is the best way to go.

shuf metadata.csv > metadata_shuf.csv

head -n 12000 metadata_shuf.csv > metadata_train.csv

tail -n 1100 metadata_shuf.csv > metadata_val.csv

To train a new model, you need to define your own config.json to define model details, trainin configuration and more (check the examples). Then call the corressponding train script.

For instance, in order to train a tacotron or tacotron2 model on LJSpeech dataset, follow these steps.

python TTS/bin/train_tacotron.py --config_path TTS/tts/configs/config.jsonTo fine-tune a model, use --restore_path.

python TTS/bin/train_tacotron.py --config_path TTS/tts/configs/config.json --restore_path /path/to/your/model.pth.tarTo continue an old training run, use --continue_path.

python TTS/bin/train_tacotron.py --continue_path /path/to/your/run_folder/For multi-GPU training, call distribute.py. It runs any provided train script in multi-GPU setting.

CUDA_VISIBLE_DEVICES="0,1,4" python TTS/bin/distribute.py --script train_tacotron.py --config_path TTS/tts/configs/config.jsonEach run creates a new output folder accomodating used config.json, model checkpoints and tensorboard logs.

In case of any error or intercepted execution, if there is no checkpoint yet under the output folder, the whole folder is going to be removed.

You can also enjoy Tensorboard, if you point Tensorboard argument--logdir to the experiment folder.

- https://github.com/keithito/tacotron (Dataset pre-processing)

- https://github.com/r9y9/tacotron_pytorch (Initial Tacotron architecture)

- https://github.com/kan-bayashi/ParallelWaveGAN (GAN based vocoder library)

- https://github.com/jaywalnut310/glow-tts (Original Glow-TTS implementation)

- https://github.com/fatchord/WaveRNN/ (Original WaveRNN implementation)

- https://arxiv.org/abs/2010.05646 (Original HiFiGAN implementation)